Tanzu Standard is now available through the VMware Cloud Provider program. Last month we released VMware Cloud Director 10.3.1 with Container Service Extension (CSE) 3.1.1, which adds support for production-ready Kubernetes clusters, offered as a managed service or as Kubernetes as a Service, using Tanzu Kubernetes Grid (TKG) clusters.

This blog post gives a technical overview of the Tanzu Standard components with VMware Cloud Director (10.3.1), VMware Tanzu Mission Control (delivered as a service through Cloud Partner Navigator), and Container Service Extension (3.1.1).

Container Service Extension 3.1.1:

Container Service Extension 3.1.1 provides the runtime for TKG clusters with three plugins: Container Network Interface (CNI), Container Storage Interface (CSI), and Cloud Provider Interface (CPI).

    bhatts@bhatts-a03 ~ % kubectl get pods -n kube-system
    NAME                                         READY   STATUS    RESTARTS   AGE
    antrea-agent-77wvh                           2/2     Running   0          2d19h
    antrea-agent-gwp8z                           2/2     Running   0          2d19h
    antrea-agent-s4mrm                           2/2     Running   0          2d19h
    antrea-agent-xxl22                           2/2     Running   0          2d19h
    antrea-controller-5456b989f5-696lr           1/1     Running   0          2d19h
    coredns-76c9c76db4-l8gbb                     1/1     Running   0          2d19h
    coredns-76c9c76db4-mxdwk                     1/1     Running   0          2d19h
    csi-vcd-controllerplugin-0                   3/3     Running   0          2d19h
    csi-vcd-nodeplugin-54f7x                     2/2     Running   0          2d19h
    csi-vcd-nodeplugin-67mcd                     2/2     Running   0          2d19h
    csi-vcd-nodeplugin-n7sxq                     2/2     Running   0          2d19h
    etcd-mstr-5h90                               1/1     Running   0          2d19h
    kube-apiserver-mstr-5h90                     1/1     Running   0          2d19h
    kube-controller-manager-mstr-5h90            1/1     Running   1          2d19h
    kube-proxy-67t8n                             1/1     Running   0          2d19h
    kube-proxy-d92z6                             1/1     Running   0          2d19h
    kube-proxy-fc8xt                             1/1     Running   0          2d19h
    kube-proxy-gjsq8                             1/1     Running   0          2d19h
    kube-scheduler-mstr-5h90                     1/1     Running   0          2d19h
    vmware-cloud-director-ccm-5489b6788c-kc9dr   1/1     Running   1          2d18h

CSE provisioned Tanzu Kubernetes Grid Cluster on VMware Cloud Director
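
To see at a glance which `kube-system` pods belong to each of the three plugins, the pod names in the listing above can be grouped by prefix. The following is a minimal shell sketch, not part of CSE: the function names `plugin_for_pod` and `label_pods` are hypothetical, and the prefixes are taken only from the pod names shown in this post, so they may differ in other releases.

```shell
#!/bin/sh
# Map a kube-system pod name to the plugin it belongs to.
# Prefixes are taken from the pod listing in this post (an assumption elsewhere).
plugin_for_pod() {
  case "$1" in
    antrea-*)                    echo "CNI" ;;
    csi-vcd-*)                   echo "CSI" ;;
    vmware-cloud-director-ccm-*) echo "CPI" ;;
    *)                           echo "core" ;;
  esac
}

# Read pod lines from stdin (pod name in the first column)
# and print "<plugin> <pod>" for each non-empty line.
label_pods() {
  while read -r pod _rest; do
    if [ -n "$pod" ]; then
      echo "$(plugin_for_pod "$pod") $pod"
    fi
  done
}
```

Against a live cluster, this could be used as `kubectl get pods -n kube-system --no-headers | label_pods`.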

Container Storage Interface(CSI):

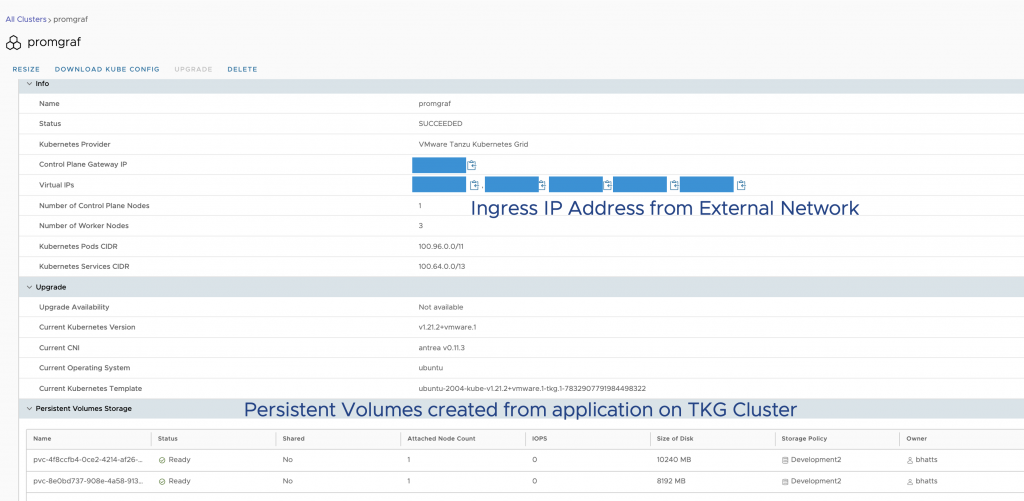

We can create TKG clusters with the storage plugin (CSI) to support dynamic creation and deletion of Persistent Volumes. Many applications with a database at their core require persistent storage to maintain application data. The volumes created by the apps are provisioned as additional storage disks, without adding to the existing cluster resource requirements. With the integrated CSI plugin and PV support, customers’ application data persists across changes to the TKG cluster and to the pods of stateful applications.

Rights for Persistent Volumes:

The provider must grant additional rights to the customer organization for Persistent Volume creation. The tenant admin can then add those rights to the cluster author role that manages the Tanzu Kubernetes clusters. The official Container Service Extension documentation describes the rights and roles and their functions.

To allow apps to create Persistent Volume Claims (PVCs), the cluster admin must define a storage class; an example can be found here. Once the cluster author provides a storage class, it can be set as the default storage class for all apps on the cluster. The TKG cluster author can then configure it as the storage class for the apps. In this example, I am configuring a WordPress app through the Bitnami Helm chart.
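
A storage class for the CSI plugin might look like the sketch below. The name `vcd-disk-dev` and the `Delete` reclaim policy match the output shown in this post; the provisioner name and the `storageProfile` and `filesystem` parameters are assumptions recalled from the driver's sample manifest and should be verified against the CSI documentation for your release. The script only writes the manifest to a local file.

```shell
#!/bin/sh
# Write a sample StorageClass manifest for the VCD CSI plugin.
# Assumptions: provisioner name and parameters; verify them for your release.
cat > storage-class.yaml <<'EOF'
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: vcd-disk-dev
  annotations:
    # Marks this class as the default for PVCs that do not name a class.
    storageclass.kubernetes.io/is-default-class: "true"
provisioner: named-disk.csi.cloud-director.vmware.com
reclaimPolicy: Delete
parameters:
  storageProfile: "*"   # assumed to mean any storage profile of the org VDC
  filesystem: "ext4"
EOF
```

The manifest can then be applied with `kubectl apply -f storage-class.yaml` and checked with `kubectl get storageclass`.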

    bhatts@bhatts-a03 ~ % kubectl get pv
    NAME                                       CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS   CLAIM                                                           STORAGECLASS   REASON   AGE
    pvc-4f8ccfb4-0ce2-4214-af26-30ac611ed735   10Gi       RWO            Delete           Bound    b4f921b8-8007-4aae-8758-d26eb72dc77e/wordpress                  vcd-disk-dev            28m
    pvc-8e0bd737-908e-4a58-913a-64f8fb2f8439   8Gi        RWO            Delete           Bound    b4f921b8-8007-4aae-8758-d26eb72dc77e/data-wordpress-mariadb-0   vcd-disk-dev            29m
    bhatts@bhatts-a03 ~ % kubectl get pvc -A
    NAMESPACE                              NAME                       STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS   AGE
    b4f921b8-8007-4aae-8758-d26eb72dc77e   data-wordpress-mariadb-0   Bound    pvc-8e0bd737-908e-4a58-913a-64f8fb2f8439   8Gi        RWO            vcd-disk-dev   31m
    b4f921b8-8007-4aae-8758-d26eb72dc77e   wordpress                  Bound    pvc-4f8ccfb4-0ce2-4214-af26-30ac611ed735   10Gi       RWO            vcd-disk-dev   31m

Persistent Volumes and Claims created by the WordPress app on the TKG cluster

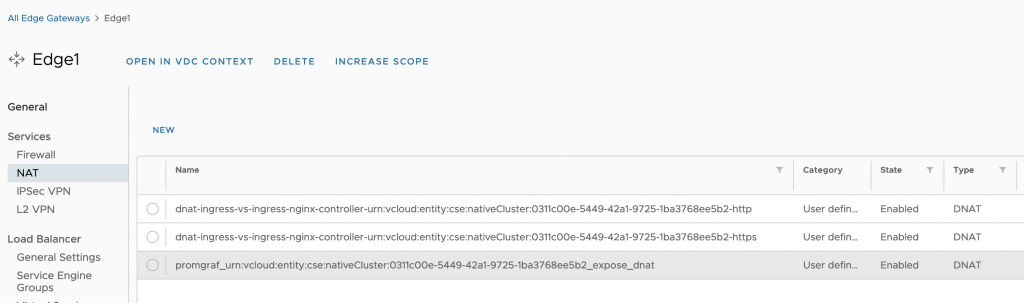

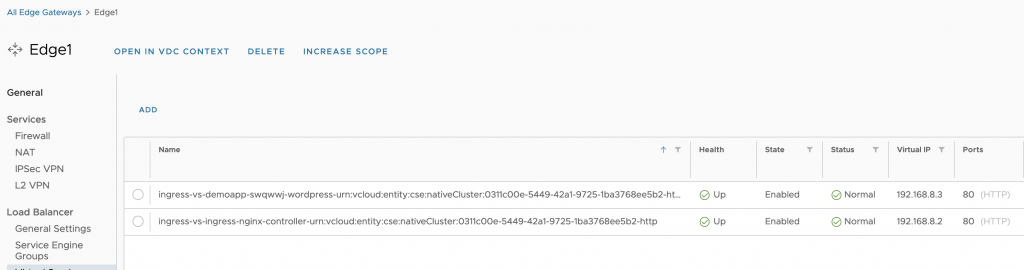

Cloud Provider Interface(CPI):

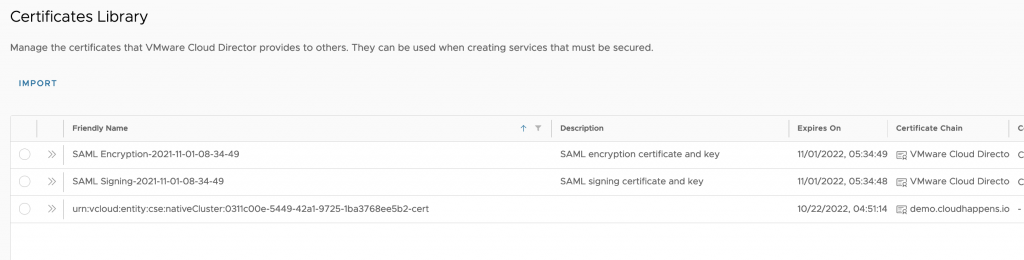

The Cloud Provider Interface controls the networking functions for ingress services with VMware Cloud Director and NSX-T Advanced Load Balancer. The CCM pod for CPI works with VMware Cloud Director to create NAT rules and with NSX-T Advanced Load Balancer to automate the load balancer service. Secure ingress access (HTTPS) for guest services is provided by uploading an SSL certificate that carries the name of the Kubernetes cluster.
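
From the application side, this automation is requested with an ordinary Kubernetes Service of type LoadBalancer. The sketch below writes such a manifest to a local file; the service name, selector label, and ports are hypothetical placeholders for a WordPress deployment and are not taken from the CSE documentation.

```shell
#!/bin/sh
# Write a sample LoadBalancer Service manifest.
# The name, selector, and ports are hypothetical placeholders for your app.
cat > wordpress-lb.yaml <<'EOF'
apiVersion: v1
kind: Service
metadata:
  name: wordpress-lb
spec:
  type: LoadBalancer   # picked up by the CPI, which automates the load balancer
  selector:
    app.kubernetes.io/name: wordpress
  ports:
    - name: http
      port: 80
      targetPort: 8080
EOF
```

After `kubectl apply -f wordpress-lb.yaml`, running `kubectl get svc wordpress-lb` should show an external IP once the load balancer has been provisioned.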

Rights for Load Balancer Service Automation

The provider must publish additional rights for automated load balancing to the customer organization. The tenant admin must then add these rights to the Tanzu Kubernetes cluster author role. The provider admin must also prepare NSX-T Advanced Load Balancer with VMware Cloud Director as described here.

Container Network Interface(CNI):

Tanzu Kubernetes clusters include Antrea as the network plugin. To read more about the Antrea network plugin, see the resources here. The Antrea CNI plugin has been supported since Container Service Extension release 3.0.4.

CSE Server Greenfield Install Upgrades for Tanzu Kubernetes Grid Clusters

There are additional improvements to the greenfield installation of Container Service Extension. A greenfield installation of the CSE server consists of the following steps:

CSE Server Install:

The server installation step includes setting up the CSE server, connecting the CSE server with the VMware Cloud Director provider portal, and uploading TKG and Native templates to the VMware Cloud Director catalog.

Tenant Onboarding

Tenant onboarding includes publishing rights bundles to the customer organization, enabling the Container Service Extension UI plugin, and enabling the customer organization on the CSE server.

Container Service Extension provides TKG Runtime by uploading TKG OVAs to the Cloud provider’s shared catalog. The providers can download these templates from the customer service portal.

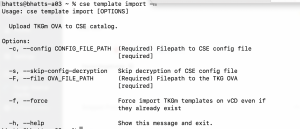

The “cse template import” command lets providers upload TKG templates to the shared catalog defined in the config.yaml file.

A new field, “no_vc_communication=true”, is introduced in the CSE server configuration; it removes the dependency on vCenter Server information for TKG clusters. With this value set, the CSE server communicates only with the VMware Cloud Director portal and not with the underlying vCenter Server.

Tanzu Mission Control and Data Protection

Tanzu Mission Control Standard Edition is included with Tanzu Standard. Tanzu Mission Control can be accessed from the Cloud Partner Navigator customer portal to manage policies, data protection, image registries, and many more use cases. Customer users can attach CSE-provisioned TKG clusters to Tanzu Mission Control and use its Data Protection functionality with Persistent Volumes. Data Protection with Tanzu Mission Control is described here.

Registry, Logging, and Monitoring

Cloud Providers can leverage the Bitnami Content Catalog for various Kubernetes ecosystem components such as Harbor Registry, Prometheus, and Grafana for logging and monitoring. For these applications, the TKG cluster author can use CPI version 1.0.2, documented here. To apply the latest CPI version, we can update the pod to use version 1.0.2 as follows:

1. Run kubectl get pods -n kube-system and note the name of the pod containing 'vmware-cloud-director-ccm'.
2. Edit the pod by running kubectl edit pod <vmware-cloud-director-ccm-xxxx> -n kube-system
3. Replace the existing 'image:' value with projects.registry.vmware.com/vmware-cloud-director/cloud-provider-for-cloud-director:1.0.2
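
As an alternative to editing the pod by hand, the same image change can be made non-interactively with `kubectl set image`. The sketch below assumes the CCM pod is managed by a Deployment named `vmware-cloud-director-ccm`; that name is inferred from the pod name in the earlier listing and is not confirmed by the CSE documentation. The `run` helper and `update_ccm_image` function are hypothetical. By default the script only prints the commands, and it runs them when `DRY_RUN=0` is set.

```shell
#!/bin/sh
# Image reference taken from this post.
CPI_IMAGE="projects.registry.vmware.com/vmware-cloud-director/cloud-provider-for-cloud-director:1.0.2"
# Assumption: the CCM pod is owned by a Deployment with this name.
CCM_DEPLOYMENT="vmware-cloud-director-ccm"

# Print the command when DRY_RUN is 1 (the default); run it when DRY_RUN=0.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

update_ccm_image() {
  # '*' updates every container in the pod template (the CCM pod shows one).
  run kubectl set image "deployment/${CCM_DEPLOYMENT}" "*=${CPI_IMAGE}" -n kube-system
  # Wait until the replacement pod is rolled out.
  run kubectl rollout status "deployment/${CCM_DEPLOYMENT}" -n kube-system
}

update_ccm_image
```

Changing the image at the Deployment level means the new version is kept if the pod is rescheduled, provided the assumption about the Deployment holds in your cluster.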

To summarize, CSE 3.1.1 with VMware Cloud Director delivers Tanzu Standard to Cloud Providers for Kubernetes as a Service and leverages Tanzu Standard components such as Tanzu Mission Control, Harbor for the registry, and Prometheus Operator with Grafana from the Bitnami Helm charts.

Further Reading: