The Long Preamble (Feel free to skip if you find my jokes dry :))

To be sure that we start off on the same page (or start off reading the same book, at least), let me draw your attention to the operative word in the title of this Post – Badly.

Now that we have gotten that out of the way, let’s begin today’s Sermon by opening our “Thou Shalt Not” Book to Chapter “Convenience”. Because, you see, however much one likes to dress it up, at the end of the day, the “HotAdd CPU” feature in modern computing is mainly for Administrative convenience.

The ability to plug in (or logically add) additional Processors to a Computer while it is up and running, and to have the Operating System instantly recognize the newly added Processors AND make them usable by the processes, tasks, or applications running in the OS, is very attractive to Systems Administrators. It does, indeed, save time and spare them the hassle of interrupting running tasks, shutting down the computer, installing the new Processors, and then powering the machine back up.

Not only that, but it also saves money. How? Well, we can run the System with fewer Processors (more cheaply, you see?) for as long as possible, until additional Processing power is required, and then add only as much as we need to get by. Because, thanks to this wonderful feature, we can do it incrementally, on demand, without interrupting services or taking down the workloads.

We can’t blame Administrators who celebrate and swear by “HotAdd CPU”. Everyone is busy these days and, with cost-cutting being a full-time job for Corporate Officers, any savings in time and effort an Admin can get is a welcome relief. Or, is it?

I ask because, come to think of it, if we were to consult the Official List of the Main Responsibilities of a SysAdmin, “Convenience/Relief” would most likely be a footnote on the second-to-last page. Right up there on Page 1 would be a bulleted list of things about the Applications, Services, or whatever money-making, problem-solving solution is hosted in the OS inside that box/VM. It’s all about the Applications. Their health and continued availability are the main reason a SysAdmin has a job in the first place.

So, why did I say all that? Just so I can tell you that when the choice falls between Administrative Convenience and Application Stability and Performance, there is NO CHOICE. Whatever hurts the Applications has to go, however much it warms the SysAdmin’s cockles.

Enabling CPU HotAdd on a VM running Windows Server (up to and including Windows Server 2019) hurts Windows. By hurting Windows, it hurts the applications hosted thereon. That. Is. All. That’s the Sermon.

The take-home message is this: Do not enable HotAdd CPU for any VM running any released Windows Server version as of the date of this writing – Windows 10 Build 20348 is the only exception to the rule at this time. But, hey! Windows 10 is something we almost never mention or care about in this Temple, so… there.

Now, The (Boring) Technical Stuff…

Related KB: Enabling vCPU HotAdd creates fake NUMA nodes on Windows (83980) (vmware.com)

VMware vSphere supports the dynamic addition of virtual CPUs to a VM while the VM is powered on. Some modern Guest Operating Systems (GOS) can detect these additional vCPUs without a reboot. Likewise, some applications inside the GOS can detect the new vCPUs and start using them without service interruption. At a high level, this feature improves VM, GOS, Application, and Service availability by minimizing the administrative intervention required when increased load starves an Application of the Compute resources it needs. Admins can address the resource starvation without a GOS reboot or an application restart.
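To find VMs that already have this feature turned on, one option is to inspect each VM's configuration file. The sketch below parses .vmx-style `key = "value"` lines and reports whether `vcpu.hotadd` is set to `TRUE`. It assumes you have copies of the .vmx files somewhere readable and that `vcpu.hotadd` is the key your vSphere version writes; please verify both in your environment (the vSphere API also exposes this setting as the VM's `config.cpuHotAddEnabled` property).

```python
import re
import sys
from pathlib import Path

# Matches .vmx lines of the form:  key = "value"
_VMX_LINE = re.compile(r'^\s*([^=#\s]+)\s*=\s*"(.*)"\s*$')


def parse_vmx(text: str) -> dict[str, str]:
    """Parse .vmx-style 'key = "value"' lines into a dict with lower-cased keys."""
    settings = {}
    for line in text.splitlines():
        match = _VMX_LINE.match(line)
        if match:
            settings[match.group(1).lower()] = match.group(2)
    return settings


def cpu_hotadd_enabled(text: str) -> bool:
    """True if the (assumed) vcpu.hotadd key is present and set to TRUE."""
    return parse_vmx(text).get("vcpu.hotadd", "").upper() == "TRUE"


if __name__ == "__main__":
    # Usage: python check_hotadd.py vm1.vmx vm2.vmx ...  (script name is hypothetical)
    for path in sys.argv[1:]:
        state = "ENABLED" if cpu_hotadd_enabled(Path(path).read_text()) else "disabled"
        print(f"{path}: CPU HotAdd {state}")
```

Anything reported as `ENABLED` on a Windows Server VM is a candidate for the phantom-node behavior described below.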

Microsoft Windows is one such GOS, and Microsoft SQL Server is one of the few Enterprise-class Applications able to leverage CPU HotAdd. When you hot-add vCPUs to a VM running a modern version of Windows and a recent version of MS SQL Server, Windows auto-detects the vCPUs and makes them available to SQLOS. In the past, the MS SQL Server Service usually had to be restarted before it would begin using the new processors. Even that is no longer necessary in the MS SQL Server world: all that is required is a “RECONFIGURE” statement, and, Boom! Very convenient.

Achtung! Segue Ahead!

There is a catch, though. In non-technical terms, it’s a nasty one. To describe it, we have to take a detour and talk (briefly) about NUMA. Yes, I know… I’ll be brief. I promise.

Non-Uniform Memory Access (NUMA) can no longer be described as “New”. It’s now as old as many SysAdmins. NUMA is how modern computer architecture arranges the CPUs and Memory in a Computer for the maximum possible performance. At a high level, it places some Memory Banks in close proximity to a given pool of CPUs and the rest in close proximity to other pools. Each such grouping of CPUs and the Memory close to them constitutes a “Node”. When CPUs and Memory are close together, executing instructions is that much faster than when the instruction has to be serviced by memory somewhere far away across the interconnect. NUMA improves performance by enabling the GOS and Applications to minimize the round-trips required to perform a task or execute an instruction. The closer the Memory is to the CPU, the more performant the System is for that unit of execution.
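A back-of-the-envelope way to see why proximity matters is to treat average memory latency as a weighted mix of local and remote accesses: t_avg = f * t_local + (1 - f) * t_remote, where f is the fraction of accesses served by local memory. This is a simplified model, and the latency figures below are hypothetical placeholders, not measurements; real local/remote ratios depend on the hardware.

```python
def average_access_latency(local_fraction: float, local_ns: float, remote_ns: float) -> float:
    """Weighted mean latency when `local_fraction` of accesses hit node-local memory."""
    if not 0.0 <= local_fraction <= 1.0:
        raise ValueError("local_fraction must be between 0 and 1")
    return local_fraction * local_ns + (1.0 - local_fraction) * remote_ns


# Hypothetical latencies for illustration only; these are not measured values.
LOCAL_NS = 100.0
REMOTE_NS = 150.0

for fraction in (1.0, 0.75, 0.5, 0.0):
    latency = average_access_latency(fraction, LOCAL_NS, REMOTE_NS)
    print(f"{fraction:.0%} local -> {latency:.1f} ns average")
```

With these placeholder numbers, dropping from all-local to half-local access raises the average from 100 ns to 125 ns; the penalty on real hardware will differ, but the direction is the point.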

End. Of. Segue.

In VMware vSphere (specifically not referencing any other Platform which may or may not exist), the Windows GOS creates a phantom NUMA Node whenever CPU Hot-Add is enabled on a VM. This happens regardless of the number of vCPUs allocated to the VM. The phantom Node (usually Node 1) holds only a tiny slice of the VM’s memory, if it holds any at all, so all Memory used by the CPUs in this Node comes from Node 0. This deviates from the expected behavior. Sadly, it is the reality and current state of things, as mentioned in the “Preamble” section above.

Now, imagine your MS SQL Server instance loaded with 128 vCPUs and 1 TB of RAM, where queries/transactions scheduled on 64 of those CPUs have to travel across the interconnect to fetch the memory needed to service those requests. When performance doesn’t meet expectations, the obvious thing to do is add more RAM and Processors. Until you give up and take the easy way out: roll back to Physical Server hardware. Bad news: the same behavior awaits the workload on that side, too. Because, you see, this is not a hypervisor issue. It’s Windows.
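To put that example in numbers: if the guest places half of the 128 vCPUs in a Node that reports no local Memory, half of the vCPUs never get a local memory access. The sketch below uses a hypothetical `NumaNode` record (vCPU count plus reported local memory), not any real API, to compute that share. The even 64/64 split mirrors the example above, and the optional `min_local_gb` threshold covers the case where the phantom Node holds a tiny slice rather than nothing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NumaNode:
    """Hypothetical record of what the guest reports for one NUMA node."""
    vcpus: int            # vCPUs the guest assigns to this node
    local_mem_gb: float   # memory the guest reports as local to this node


def remote_only_vcpu_share(nodes: list[NumaNode], min_local_gb: float = 0.0) -> float:
    """Fraction of vCPUs in nodes whose local memory is at or below `min_local_gb`."""
    total = sum(node.vcpus for node in nodes)
    stranded = sum(node.vcpus for node in nodes if node.local_mem_gb <= min_local_gb)
    return stranded / total if total else 0.0


# The example above: 128 vCPUs, 1 TB (1024 GB) of RAM, 64 vCPUs in a memory-less node.
vm = [NumaNode(vcpus=64, local_mem_gb=1024.0), NumaNode(vcpus=64, local_mem_gb=0.0)]
print(f"{remote_only_vcpu_share(vm):.0%} of vCPUs fetch all of their memory remotely")
```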

This is one of those performance inhibitors that are usually not obvious to the Administrator/Operator and not easily detected in the normal course of operation. It is even harder to spot because the expected and advertised behavior of VMware vSphere is that enabling HotAdd CPU on a VM disables virtual NUMA (vNUMA) on that VM. This has been interpreted (wrongly) to mean that Windows will not see NUMA capabilities on the VM. The following presents the observation in more detail:

Details:

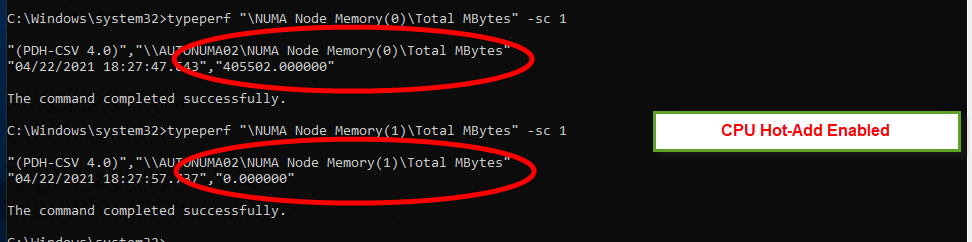

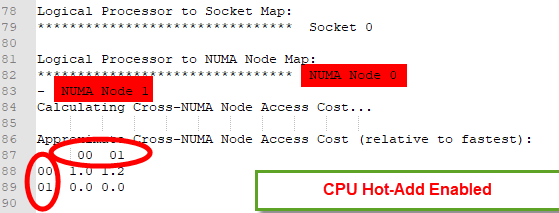

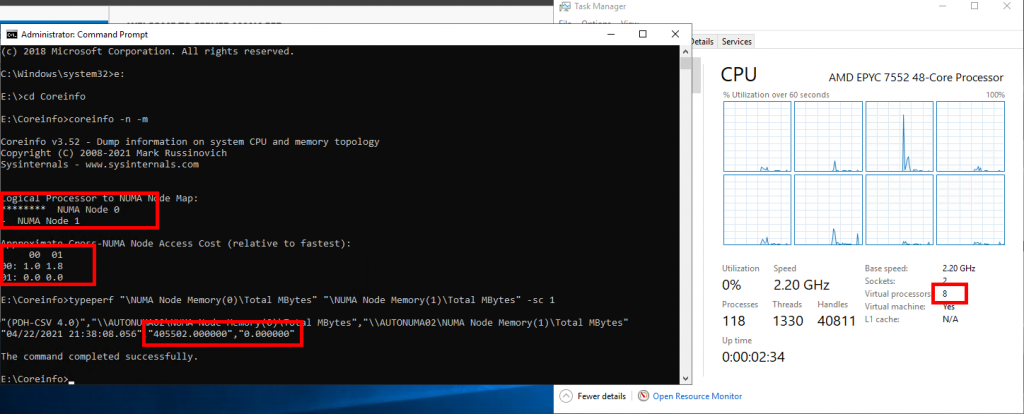

With CPU Hot-Add Enabled, Windows reports 2 NUMA Nodes, where one of the Nodes (Node 1) has ZERO Local Memory.
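To check for this from inside the guest without extra tools, the Win32 functions `GetNumaHighestNodeNumber` and `GetNumaAvailableMemoryNodeEx` can be called through Python's `ctypes`. This is only a rough sketch: it reports *available* (free) memory per node, not the node's total local memory, so a zero on a heavily loaded Node 0 would be a false alarm. CoreInfo and TypePerf remain the tools used in this post, and `memoryless_nodes` is an illustrative helper name, not part of any library.

```python
import ctypes
import sys


def numa_available_memory() -> dict[int, int]:
    """Return {node number: available bytes} for every NUMA node Windows reports."""
    if sys.platform != "win32":
        raise OSError("this check calls kernel32 and only runs on Windows")
    kernel32 = ctypes.WinDLL("kernel32", use_last_error=True)

    highest = ctypes.c_ulong()
    if not kernel32.GetNumaHighestNodeNumber(ctypes.byref(highest)):
        raise ctypes.WinError(ctypes.get_last_error())

    available = {}
    for node in range(highest.value + 1):
        nbytes = ctypes.c_ulonglong()
        # Nodes the call fails on are skipped rather than reported as empty.
        if kernel32.GetNumaAvailableMemoryNodeEx(ctypes.c_ushort(node), ctypes.byref(nbytes)):
            available[node] = nbytes.value
    return available


def memoryless_nodes(available: dict[int, int]) -> list[int]:
    """Nodes reporting zero available memory (candidates for a phantom node)."""
    return sorted(node for node, nbytes in available.items() if nbytes == 0)


if __name__ == "__main__" and sys.platform == "win32":
    avail = numa_available_memory()
    for node, nbytes in sorted(avail.items()):
        print(f"Node {node}: {nbytes / 2**30:.1f} GiB available")
    print("Memory-less nodes:", memoryless_nodes(avail) or "none")
```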

TypePerf reports this:

CoreInfo reports the same:

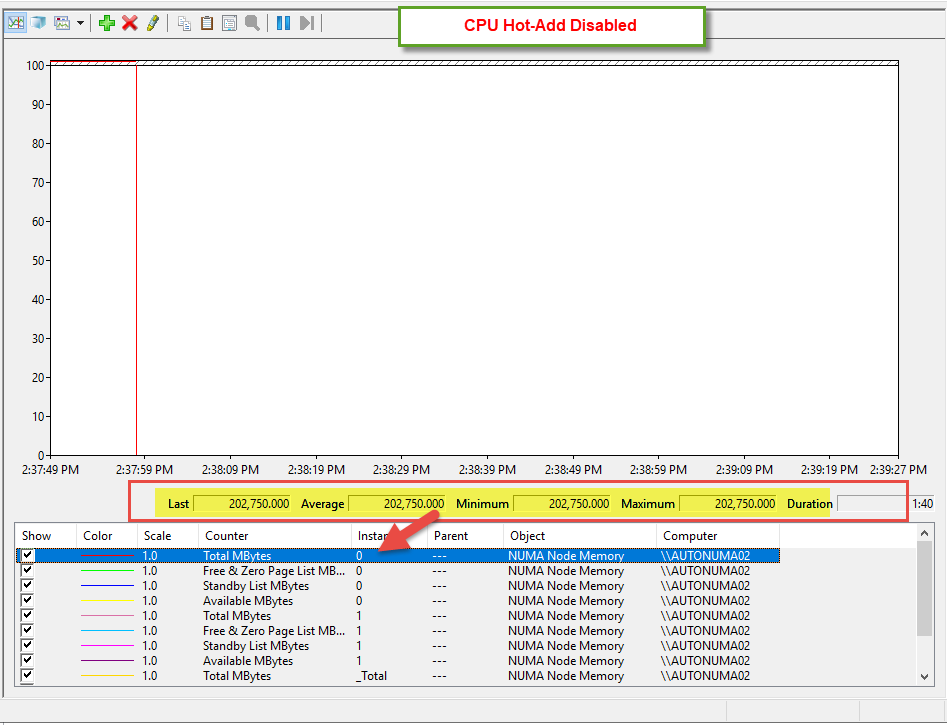

We can also observe this in Perfmon:

Other Observations:

- In addition, when CPU Hot-Add is enabled, vNUMA appears to be disabled and we are unable to see the NUMA Topology in Windows Task Manager.

- However, this appears to be true only AS LONG AS the VM does not have more than 64 vCPUs.

But, once the VM has more than 64 vCPUs, Windows creates another NUMA Node that we can see in Task Manager:

Windows creates an additional node (with no local Memory) in ALL CASES when CPU Hot-Add is enabled, even when the VM is not Wide (that is, when its vCPUs fit within a single physical NUMA node).

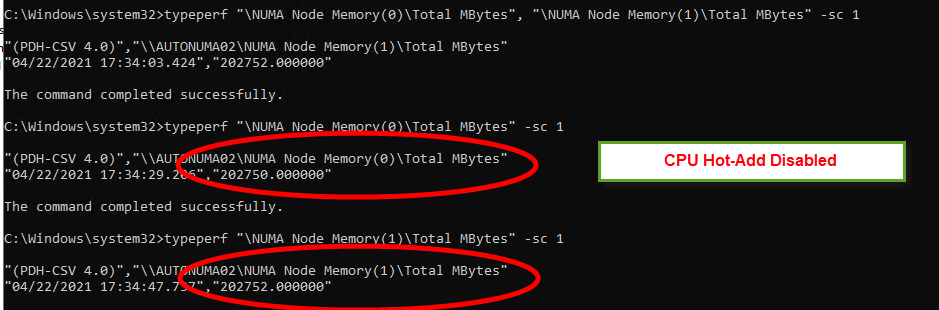

This behavior of creating Memory-less NUMA Nodes is only observed when CPU Hot-Add is enabled on the VM. The following is what we observe when CPU Hot-Add is disabled:

Discover more from VMware Cloud Foundation (VCF) Blog
