By Uday Kurkure, Lan Vu, and Hari Sivaraman

VMware is announcing near bare-metal or better-than-bare-metal performance for machine learning training of the natural language processing workload BERT with the SQuAD dataset and the image segmentation workload Mask R-CNN with the COCO dataset. Earlier, VMware, with Dell, submitted its first machine learning benchmark results to MLCommons. The results, which show that high performance can be achieved on a VMware virtualized platform featuring NVIDIA GPU and AI technology, were accepted and published in the MLPerf Inference v1.1 category.

For training workloads, VMware focused on scaling up the number of NVIDIA GPUs connected by NVIDIA NVLink.

The testbed consisted of a VMware vSphere + NVIDIA AI-Ready Enterprise Platform, which included:

- VMware vSphere 7.0 U3c data center virtualization software

- NVIDIA AI Enterprise software

- 4x NVIDIA A100-40GB With NVIDIA NVLink

- Dell EMC PowerEdge XE8545 rack server

- 2x AMD EPYC 7543 processors with 128 logical cores

- Image Segmentation Mask R-CNN with COCO dataset from https://github.com/NVIDIA/DeepLearningExamples/tree/master/PyTorch/Segmentation/MaskRCNN

- Language Modeling BERT with SQuAD dataset from https://github.com/NVIDIA/DeepLearningExamples/tree/master/PyTorch/LanguageModeling/BERT

To measure the performance improvement, VMware scaled the number of GPUs from 1 to 2 to 4. The GPUs were connected by NVLink, which provides high-speed, peer-to-peer (P2P) GPU-to-GPU communication.

Scaling GPUs with NVLink delivers up to 1.18x training throughput for Mask R-CNN and up to 2.43x training throughput for BERT compared with no P2P communication.

To measure any vGPU overhead, VMware benchmarked this solution against the same system with GPUs in passthrough mode. The virtualized system achieved up to 103% of the equivalent bare-metal performance with only 16 logical CPU cores and up to 4x NVIDIA vGPU A100-40c. On the virtualized platform, the remaining 112 of the 128 logical CPU cores stay available for other demanding tasks in your data center. The solution achieves near bare-metal performance while providing all the virtualization benefits of VMware vSphere: server consolidation, power savings, virtual machine over-commitment, vMotion, high availability, DRS, central management with vCenter, suspend/resume VMs, cloning, and more.

This blog discusses the following topics:

- VMware vSphere and NVIDIA AI Enterprise

- Architectural Features of NVIDIA GPU Ampere A100: 3rd Generation NVLink

- Scaling ML Training Performance in VMware vSphere with NVIDIA vGPUs Connected by NVLink

- ML Training Performance Results for Mask R-CNN with No GPU-to-GPU Communication and NVLink-enabled GPU-to-GPU Communication in vSphere

- ML Training Performance Results for BERT on SQuAD dataset with No GPU-to-GPU Communication and NVLink-enabled GPU-to-GPU Communication in vSphere

- Comparison of Scaling of ML Training with NVLinked vGPUs with Performance Results for Passthrough/Bare Metal GPUs

- Takeaways

VMware vSphere and NVIDIA AI Enterprise

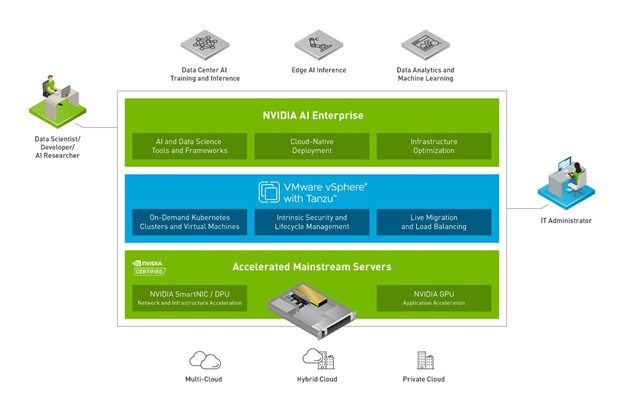

VMware and NVIDIA have partnered to unlock the power of AI for every business by delivering an end-to-end enterprise platform optimized for AI workloads. This integrated platform delivers best-in-class AI software: the NVIDIA AI Enterprise suite. It is optimized and exclusively certified by NVIDIA for the industry’s leading virtualization platform: VMware vSphere. The platform:

- Accelerates the speed at which developers can build AI and high-performance data analytics

- Enables organizations to scale modern workloads on the same VMware vSphere infrastructure in which they have already invested

- Delivers enterprise-class manageability, security, and availability

Furthermore, with VMware vSphere with Tanzu and VMware Cloud Foundation with Tanzu, enterprises can run containers alongside their existing VMs.

Figure 1. NVIDIA and VMware products working alongside each other

VMware has pioneered compute, storage, and network virtualization, reshaping yesterday’s bare-metal data centers into modern software-defined data centers (SDDC). Despite this platform availability, many machine learning workloads are still run on bare-metal systems. Deep learning workloads are so compute-intensive that they require compute accelerators like NVIDIA GPUs and software optimized for AI; however, many accelerators are not yet fully virtualized. Deploying unvirtualized accelerators makes such systems difficult to manage when deployed at scale in data centers. VMware’s collaboration with NVIDIA brings virtualized GPUs to data centers, allowing data center operators to leverage the many benefits of virtualization.

Architectural Features of NVIDIA GPU Ampere A100: 3rd Generation NVLink

VMware used four virtualized NVIDIA A100 Tensor Core GPUs, fully connected by NVLink, with NVIDIA AI Enterprise software in vSphere for the training workloads.

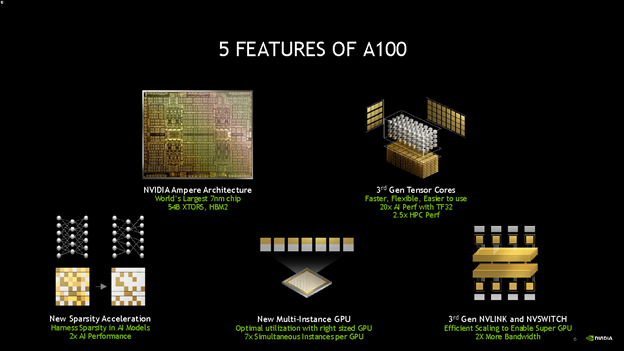

Figure 2. Five features of NVIDIA A100 GPUs

The NVIDIA Ampere architecture is designed to accelerate diverse cloud workloads, including high performance computing, deep learning training and inference, machine learning, data analytics, and graphics.

In this study, we’ll focus on 3rd-generation NVIDIA NVLink.

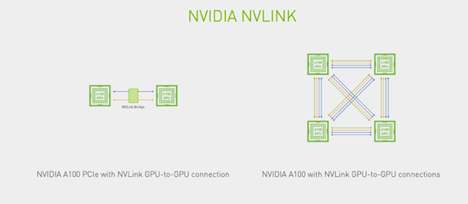

NVLink is a direct GPU-to-GPU interconnect. Figure 3 shows two PCIe-based NVIDIA A100 GPUs connected by an NVLink bridge and four A100-SXM GPUs fully connected by NVLink. Note that each GPU in the 4-GPU configuration is bidirectionally linked to the three other GPUs.

Figure 3. Two NVIDIA A100 PCIe GPUs connected by an NVLink bridge, and four NVIDIA A100 GPUs fully connected by NVLinks
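The fully connected layout in Figure 3 can be sketched in a few lines of Python. This is only an illustration of the topology described above: the function names are hypothetical, and a "link" here means a directly connected GPU pair, not an individual physical NVLink lane.

```python
from itertools import combinations


def full_mesh_links(num_gpus):
    """Return every unordered GPU pair in a fully connected topology."""
    return list(combinations(range(num_gpus), 2))


def peers_of(gpu, num_gpus):
    """Return the GPUs that `gpu` reaches directly in a full mesh."""
    return [other for other in range(num_gpus) if other != gpu]


links = full_mesh_links(4)
print(f"GPU pairs in a 4-GPU full mesh: {len(links)}")
for gpu in range(4):
    print(f"GPU {gpu} is directly linked to GPUs {peers_of(gpu, 4)}")
```

With four GPUs, every GPU has three direct peers, which matches the 4-GPU configuration described in the text.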

Instead of a central hub, NVLink uses mesh networking to communicate directly with other GPUs; therefore, it offers higher throughput and lower latency. Table 1 compares PCIe 4.0 and 3rd-generation NVLink. NVLink 3.0 offers a payload rate of about 6.25 gigabytes per second per lane per direction, while PCIe 4.0 offers about 1.97 gigabytes per second per lane per direction.

| Interconnect | PCIe 4.0 | NVLink 3.0 |

| Effective payload rate per lane, per direction | ~1.97 GB/s | ~6.25 GB/s |

| Architecture | Volta, Ampere | Ampere |

Table 1. NVLink vs PCIe comparison

Scaling ML Training Performance in VMware vSphere with NVIDIA vGPUs Connected by NVLink

ML/AI workloads are becoming pervasive in today’s data centers and cover many domains. To show the flexibility of VMware vSphere virtualization in disparate environments, we chose two of the most popular types of workloads: natural language processing, represented by BERT, and object detection, represented by Mask R-CNN. We obtained both workloads from the PyTorch section of the NVIDIA DeepLearningExamples GitHub repository. To measure virtualization overhead, if any, we ran each workload in both vGPU and passthrough/bare-metal GPU environments.

| Area | Task | Model | Dataset | Batch Size |

| Vision | Object detection (large) | Mask R-CNN | COCO (1200×1200) | 4 |

| Language | Language processing | BERT | SQuAD v1.1 (max_seq_len=384) | 4 |

Table 2. The two different ML training workloads we used

We focused on scaling the number of GPUs from 1 to 2 to 4. We also conducted experiments with no GPU-to-GPU communication and NVLink-based GPU-to-GPU communication.
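The article does not say how the training jobs were launched or how the no-communication baseline was configured. As one possible setup, the sketch below assumes PyTorch's `torchrun` launcher and NCCL's `NCCL_P2P_DISABLE` environment variable, which turns off direct peer-to-peer transfers between GPUs. The script name `train.py` and the helper `build_launch` are hypothetical.

```python
import os


def build_launch(num_gpus, script, use_p2p=True, base_env=None):
    """Build a (command, environment) pair for one scaling experiment.

    Assumption: training is started with torchrun and NCCL handles
    GPU-to-GPU traffic. This is not the article's documented method.
    """
    env = dict(os.environ if base_env is None else base_env)
    # Expose only the first `num_gpus` devices to the training job.
    env["CUDA_VISIBLE_DEVICES"] = ",".join(str(i) for i in range(num_gpus))
    if use_p2p:
        env.pop("NCCL_P2P_DISABLE", None)
    else:
        # NCCL then avoids direct P2P paths (such as NVLink) between GPUs.
        env["NCCL_P2P_DISABLE"] = "1"
    cmd = ["torchrun", "--standalone", f"--nproc_per_node={num_gpus}", script]
    return cmd, env


for gpus in (1, 2, 4):
    for p2p in (False, True):
        cmd, env = build_launch(gpus, "train.py", use_p2p=p2p, base_env={})
        print(" ".join(cmd), "| P2P enabled:", p2p, "| env:", env)
```

To actually start a run, the pair could be passed to `subprocess.run(cmd, env=env, check=True)` on a machine with the required GPUs and software installed.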

Hardware/Software Configurations

Table 3 describes the hardware and software configurations used for the bare-metal and virtual runs. The most salient difference is that the virtual configuration used virtualized A100 GPUs, denoted by NVIDIA GRID A100-40c vGPU. Both systems had the same four A100-SXM-40GB physical GPUs.

| | Passthrough/Bare-Metal GPU Configuration | vGPU Configuration |

| System | Dell EMC PowerEdge XE8545 | Dell EMC PowerEdge XE8545 |

| Processors | 2x AMD EPYC 7543 | 2x AMD EPYC 7543 |

| Logical Processor | 16 allocated to the VM | 16 allocated to the VM |

| GPU | 4x NVIDIA A100-SXM-40GB | 4x NVIDIA GRID A100-40c vGPU |

| Memory | 128 GB | 128 GB for the VM |

| Storage | 6.95 TB SSD | 6.95 TB SSD |

| OS | Ubuntu 20.04 VM in VMware vSphere 7.0.3c | Ubuntu 20.04 VM in VMware vSphere 7.0.3c |

| NVIDIA AIE VIB for ESXi | — | ESXi VIB 470.82.1 |

| NVIDIA Driver | 470.82.1 | 470.82.1 |

| CUDA | 11.3 | 11.3 |

| Container | Docker 20.10.12 | Docker 20.10.12 |

Table 3. Passthrough/bare-metal GPU vs. vGPU configurations

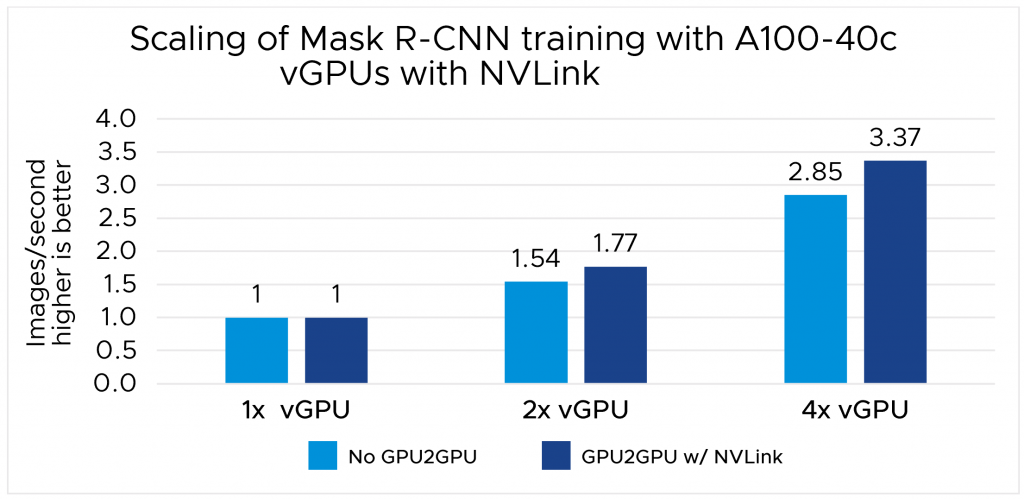

ML Training Performance Results for Mask R-CNN with No GPU-to-GPU Communication and NVLink-enabled GPU-to-GPU Communication in vSphere

Figure 4 compares the throughput (images processed per second) of the Mask R-CNN training workload on VMware vSphere 7.0 U3c with NVIDIA vGPUs, with NVLink-enabled GPU-to-GPU communication versus no GPU-to-GPU communication. The baseline with no GPU-to-GPU communication is set to 1.00, and the NVLink result is presented relative to that baseline.

Figure 4 shows that VMware vSphere with NVIDIA vGPUs with NVLink delivers 1.15x throughput when 2 GPUs are linked by NVLink and 1.18x throughput when 4 GPUs are linked by NVLink.

Figure 4. Normalized throughput (images processed per second): NVIDIA vGPU with NVLink GPU-to-GPU vs no GPU-to-GPU communication
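The normalization used in the throughput figures amounts to dividing a measured throughput by the baseline throughput for the same GPU count. A minimal sketch follows; the function name is hypothetical, and the raw throughput values are placeholders chosen for illustration, not measurements from this study.

```python
def normalized_throughput(measured, baseline):
    """Return measured throughput as a multiple of the baseline (1.00)."""
    if baseline <= 0:
        raise ValueError("baseline throughput must be positive")
    return measured / baseline


# Placeholder values only: the article reports ratios, not raw throughput.
baseline_images_per_s = 200.0
nvlink_images_per_s = 230.0

ratio = normalized_throughput(nvlink_images_per_s, baseline_images_per_s)
print(f"{ratio:.2f}x of baseline ({ratio * 100:.0f}%)")
```

The same ratio can be read as a multiple (for example, 1.15x) or as a percentage of the baseline (115%).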

ML Training Performance Results for BERT on SQuAD dataset with No GPU-to-GPU Communication and NVLink-enabled GPU-to-GPU Communication in vSphere

Figure 5 compares the throughput (queries processed per second) of the BERT training workload with the SQuAD dataset on VMware vSphere 7.0 U3c with NVIDIA vGPUs, with NVLink-enabled GPU-to-GPU communication versus no GPU-to-GPU communication. The baseline with no GPU-to-GPU communication is set to 1.00, and the NVLink result is presented relative to that baseline.

Figure 5 shows that VMware vSphere with NVIDIA vGPUs with NVLink delivers 1.5x throughput when 2 GPUs are linked by NVLink and 2.43x throughput when 4 GPUs are linked by NVLink. Note that 2 vGPUs connected by NVLink for GPU-to-GPU communication outperform the 4-GPU configuration without GPU-to-GPU communication for the BERT training workload.

The performance improvement from NVLink depends on how much GPU-to-GPU communication a workload inherently requires. BERT training requires significantly more GPU-to-GPU communication than Mask R-CNN training. Hence, BERT training showed 2.43x training throughput with NVLink, while Mask R-CNN showed 1.18x. We used the NVLink counters in the NVIDIA System Management Interface (nvidia-smi) to obtain the NVLink bandwidth usage.

Figure 5. Normalized throughput (queries processed per second): NVIDIA vGPU with NVLink GPU-to-GPU vs no GPU-to-GPU communication
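The article does not list the exact nvidia-smi commands or the counter units used to obtain NVLink bandwidth usage. As a rough sketch, assuming you have sampled a cumulative per-link transmit counter twice and that it is reported in KiB, the average bandwidth over the interval could be computed as follows. The function name and the sample values are hypothetical.

```python
def link_bandwidth_gb_per_s(start_kib, end_kib, interval_s):
    """Average bandwidth (GB/s) between two cumulative counter samples.

    Assumption: the counter is cumulative and expressed in KiB.
    """
    if interval_s <= 0:
        raise ValueError("interval must be positive")
    if end_kib < start_kib:
        raise ValueError("counter decreased (reset or wrap-around)")
    bytes_moved = (end_kib - start_kib) * 1024
    return bytes_moved / interval_s / 1e9


# Placeholder samples, not measurements from this study.
sample_start_kib = 5_000_000
sample_end_kib = 25_000_000
print(f"{link_bandwidth_gb_per_s(sample_start_kib, sample_end_kib, 10.0):.3f} GB/s")
```

Comparing this figure between the BERT and Mask R-CNN runs would give one way to quantify how communication-heavy each workload is.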

Comparison of Scaling of ML Training with NVLinked vGPUs with Performance Results for Passthrough/Bare Metal GPUs

Figure 6 compares the throughput (queries processed per second) of the BERT with SQuAD workload on VMware vSphere 7.0 U3c with NVIDIA NVLinked vGPUs against the passthrough/bare-metal configuration with NVLinked GPUs. The bare-metal baseline is set to 1.00 (100%), and the virtualized result is presented relative to that baseline.

Figure 6 shows that VMware vSphere with NVIDIA vGPUs matches or slightly exceeds bare-metal performance, ranging from 101% to 103% of the baseline, for ML training of the BERT workload with the SQuAD dataset.

Figure 6. Normalized throughput (queries processed per second): NVIDIA vGPU vs bare metal/passthrough GPU

Takeaways

- The VMware/NVIDIA vGPU solution delivers performance near or better than passthrough/bare-metal GPUs for ML training workloads.

- NVLink-based GPU-to-GPU communication enhances ML training performance significantly. Note that 4 GPUs connected by NVLink delivered 2.43x throughput for the training of BERT with the SQuAD dataset compared to no GPU-to-GPU communication.

- VMware achieved this performance with only 16 of the 128 available logical CPU cores, leaving 112 logical CPU cores for other jobs in the data center. This is the extraordinary power of virtualization!

- VMware vSphere combines the power of NVIDIA AI Enterprise software, which includes NVIDIA’s vGPU technology, with the many data center management benefits of virtualization.

Acknowledgments

VMware thanks Vinay Bagade, Charlie Huang, Anne Hecht, Manvendar Rawat, and Raj Rao of NVIDIA for providing the software to VMware. The authors would like to acknowledge Juan Garcia-Rovetta and Tony Lin of VMware for their management support.

References

- NVIDIA Ampere Architecture

https://www.nvidia.com/en-us/data-center/ampere-architecture

- NVIDIA NVLink and NVSwitch

https://www.nvidia.com/en-us/data-center/nvlink/

- NVIDIA Deep Learning Examples

https://github.com/NVIDIA/DeepLearningExamples

- MLCommons

https://mlcommons.org/en/

- MLCommons v1.1 Results

https://mlcommons.org/en/inference-datacenter-11

- NVIDIA Ampere Architecture In-Depth

https://developer.nvidia.com/blog/nvidia-ampere-architecture-in-depth

- NVIDIA Enterprise Documentation: Virtual GPU Types for Supported GPUs

https://docs.nvidia.com/ai-enterprise/latest/user-guide/index.html#supported-gpus-grid-vgpu

- MIG or vGPU Mode for NVIDIA Ampere GPU: Which One Should I Use? (Part 1 of 3)

https://blogs.vmware.com/performance/2021/09/mig-or-vgpu-part1.html

- NVLink Wikipedia

https://en.wikipedia.org/wiki/NVLink

- Introduction to MLPerf Inference v1.1 with Dell EMC Servers

https://infohub.delltechnologies.com/p/introduction-to-mlperf-tm-inference-v1-1-with-dell-emc-servers

- MLPerf Inference Virtualization in VMware vSphere Using NVIDIA vGPUs

https://blogs.vmware.com/performance/2020/12/mlperf-inference-virtualization-in-vmware-vsphere-using-nvidia-vgpus.html

- NVIDIA TensorRT

https://developer.nvidia.com/tensorrt

- NVIDIA Triton Inference Server

https://developer.nvidia.com/nvidia-triton-inference-server

- J. Reddi et al., “MLPerf Inference Benchmark,” 2020 ACM/IEEE 47th Annual International Symposium on Computer Architecture (ISCA), Valencia, Spain, 2020, pp. 446-459, doi: 10.1109/ISCA45697.2020.00045.
