In today’s fast-paced digital landscape, IT organizations are caught between two worlds: rapidly adopting and scaling modern infrastructure while maintaining visibility, security and control.

VMware Cloud Foundation (VCF) Networking evolved significantly in VCF 9.0 with a simpler network deployment model with VPCs, self-service access to network services, and strong integration across the VCF stack. VCF 9.1 will build on these capabilities, delivering enhanced interoperability with the underlying physical fabric, flexible connectivity options, enhanced network services, greater support for VMware vSphere Kubernetes Service (VKS), and deeper flow analytics, VPC planning, and network diagnostics.

A Unified Network Fabric for the Private Cloud

EVPN-VXLAN interoperability with the network fabric

Traditional networking often involves deploying bespoke connectivity and network protocols to support VMs, containers, and physical workloads. This creates inconsistent policies and deployment models, nondeterministic network performance, and a lack of end-to-end visibility and troubleshooting across these environments.

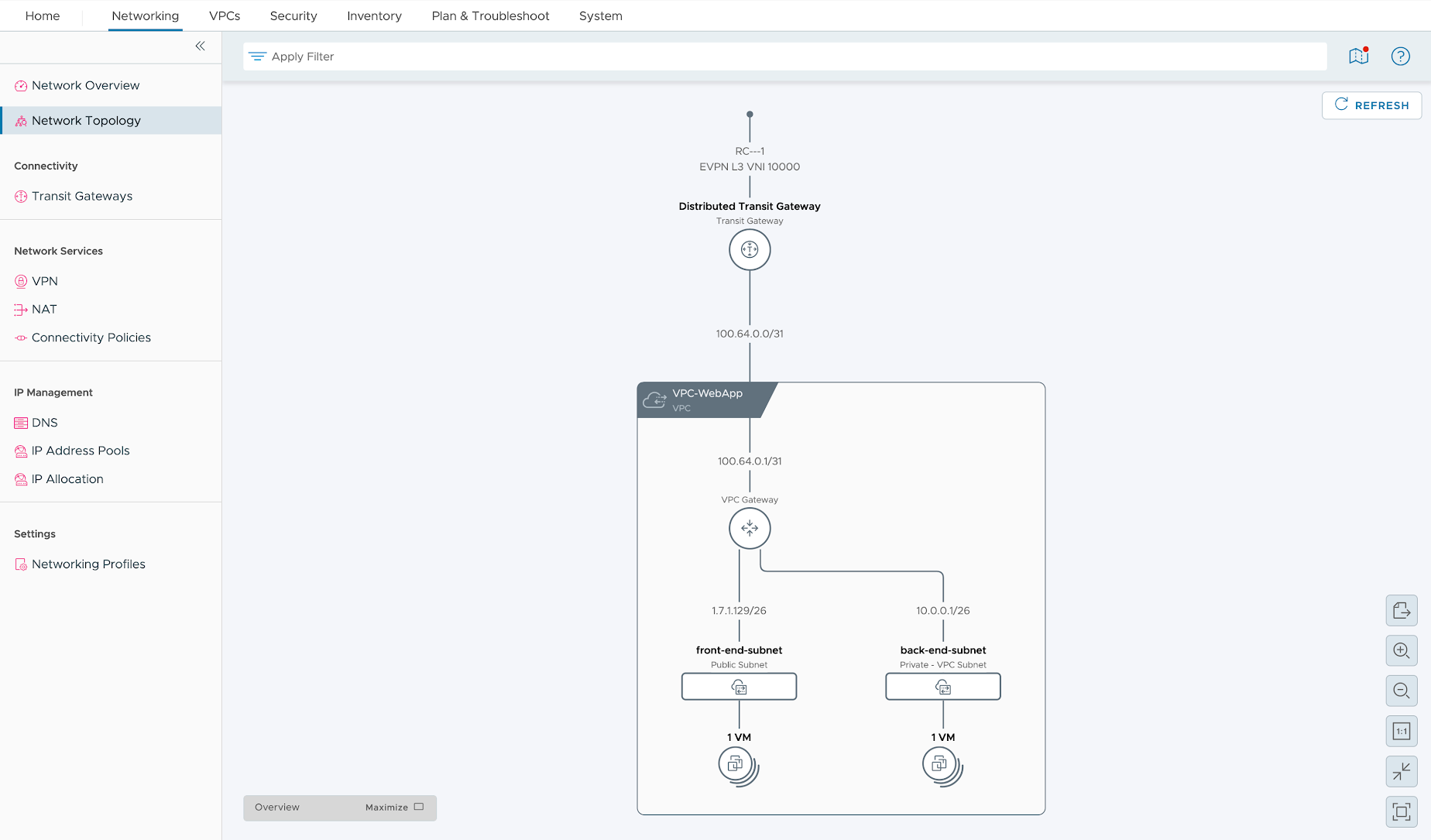

With VCF 9.1, we’ll introduce EVPN-VXLAN-based interoperability with the network fabric, which will further simplify how VPCs and VCF workloads connect to the physical infrastructure.

This standards-based interoperability between VCF and leading physical switch fabrics, including Arista UCN, Cisco Nexus ONE, and SONiC, will allow networking teams to use consistent network connectivity and protocols across the entire network fabric (and down to the ESX host). It will also give VCF admins a pre-configured external connection for their VPCs and workloads.

- For networking teams, this means achieving a consistent topology and configuration across the entire private cloud network, which also simplifies monitoring and troubleshooting.

- For VCF admins and virtualization teams, this means being able to deploy and connect their workloads and VPCs without needing deep expertise in networking or managing virtual appliances.

Furthermore, distributed connectivity from ESX hosts directly to the physical fabric will also eliminate the need for dedicated network edge nodes, cut capital costs, and address scaling bottlenecks associated with centralized connectivity.

Read the blog to learn more about the Broadcom-Arista Networks collaboration.

Flexible Connectivity for Complex Routing Topologies

VCF 9.1 will make several enhancements to how VPCs and Transit Gateways are deployed and managed. Tenants will gain new flexible connectivity options, including multiple external connections and multiple Transit Gateways with distributed VLAN connections per tenant with isolated VPN, static routes, and custom NAT configurations. This will deliver flexible multi-site routing with precise traffic management without needing external routing equipment. VCF 9.1 will also enable administrators to define the span of VPCs directly from vCenter or NSX: VPCs can be restricted to specific clusters or configured to span all vCenters in the networking domain when required.

Enhanced DirectPath I/O and Uniform Passthrough for NVIDIA Accelerated NICs

VCF 9.1 will deliver high-throughput, high-packet-rate, low-latency networking by providing virtual machines with direct hardware access to NVIDIA ConnectX and BlueField adapters.

Enhanced DirectPath I/O (EDPIO) for NVIDIA ConnectX-6 Dx, ConnectX-7, or BlueField-3 (NIC mode) is specifically optimized for AI, machine learning, and distributed computing, and will offer support for GPUDirect RDMA to facilitate high-speed, direct communication between GPUs across the network. This will maximize hardware-level performance and offer advanced RDMA capabilities for compute-intensive applications.

Uniform Passthrough (UPT) for NVIDIA ConnectX-7, BlueField-2, and BlueField-3 adapters (NIC mode) is optimized for non-AI high-performance workloads, and will deliver hardware-level acceleration by leveraging VMXNET3 Hardware Emulation. It will provide exceptional speeds and efficiency without requiring customers to manage guest OS drivers.

Crucially, both EDPIO and UPT maintain full compatibility with essential cluster features—including vMotion, High Availability (HA), and Distributed Resource Scheduler (DRS)—ensuring you do not have to compromise between network performance and workload mobility.

Enhanced Workload Connectivity and Services

VPC Policy-based Connectivity

Scaling a private cloud shouldn’t mean reconfiguring firewalls every time a new department needs a network. VCF 9.1 will streamline network connectivity and isolation using VPC Policy-based Connectivity. Admins will be able to use different types of Communities to enforce granular VPC isolation and deliver shared services without complex network changes:

- Regular Communities: VPCs within a Community can communicate; those outside cannot

- Isolated Communities: Strict “no-communication” zones for VPCs with sensitive workloads

- Shared Communities: Designed for universal services like DNS that need to be reachable from all VPCs

These capabilities will be available through the VMware Advanced Cyber Compliance add-on. Read the blog for more details.
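To make the three Community types concrete, here is a minimal Python sketch of the reachability rules described above. It is an illustrative model only, not the VCF or NSX API: the `Community` class, the `can_communicate` function, and the one-community-per-VPC simplification are all assumptions made for this example. It also assumes that a Shared Community stays reachable even from an Isolated one, since shared services are described as reachable from all VPCs.

```python
from dataclasses import dataclass
from enum import Enum


class CommunityType(Enum):
    REGULAR = "regular"
    ISOLATED = "isolated"
    SHARED = "shared"


@dataclass(frozen=True)
class Community:
    """Hypothetical stand-in for the community a VPC belongs to."""
    name: str
    kind: CommunityType


def can_communicate(a: Community, b: Community) -> bool:
    """Return True if a VPC in community `a` may talk to a VPC in community `b`."""
    # Assumption: shared services (for example DNS) are reachable from every VPC.
    if CommunityType.SHARED in (a.kind, b.kind):
        return True
    # Isolated communities are no-communication zones, even between members.
    if CommunityType.ISOLATED in (a.kind, b.kind):
        return False
    # Regular communities: only VPCs in the same community can communicate.
    return a.name == b.name


# Example: two regular communities, one isolated zone, one shared service.
finance = Community("finance", CommunityType.REGULAR)
hr = Community("hr", CommunityType.REGULAR)
pci = Community("pci", CommunityType.ISOLATED)
dns = Community("dns", CommunityType.SHARED)

print(can_communicate(finance, hr))   # different regular communities
print(can_communicate(pci, dns))      # isolated VPC reaching a shared service
```

The point of the model is that isolation is decided by community membership rather than by per-VPC firewall rules, which is why adding a new VPC to a community needs no further network changes.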

IP Address Management (IPAM) for Cloud Consumption

VCF 9.1 will include enhanced IPAM allocation, usage, and visibility. VCF administrators will be able to consume these capabilities through their console of choice: VCF Automation, vCenter, or NSX. The VCF 9.1 implementation will allow one IP Block to support up to 10 CIDRs and 10 IP ranges, and to exclude specific IPs, minimizing any disruption to existing VPC consumers. Refer to the VCF 9.1 release notes for more details.
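As a rough illustration of what an IP Block with multiple CIDRs, ranges, and exclusions looks like, the sketch below models one with Python's standard `ipaddress` module. This is not the VCF or NSX data model: the `IPBlock` class and its method names are hypothetical, and the two limits of 10 are taken from the description above on the assumption that they apply independently to CIDRs and to ranges.

```python
import ipaddress
from dataclasses import dataclass, field

MAX_CIDRS = 10   # per-block limit stated in the post
MAX_RANGES = 10  # per-block limit stated in the post


@dataclass
class IPBlock:
    """Hypothetical model of an IP block: CIDRs + ranges - excluded IPs."""
    cidrs: list = field(default_factory=list)
    ranges: list = field(default_factory=list)   # (first, last) address pairs
    excluded: set = field(default_factory=set)

    def add_cidr(self, cidr: str) -> None:
        if len(self.cidrs) >= MAX_CIDRS:
            raise ValueError("an IP block supports at most 10 CIDRs")
        self.cidrs.append(ipaddress.ip_network(cidr))

    def add_range(self, first: str, last: str) -> None:
        if len(self.ranges) >= MAX_RANGES:
            raise ValueError("an IP block supports at most 10 IP ranges")
        lo, hi = ipaddress.ip_address(first), ipaddress.ip_address(last)
        if lo > hi:
            raise ValueError("range start must not be after range end")
        self.ranges.append((lo, hi))

    def exclude(self, ip: str) -> None:
        self.excluded.add(ipaddress.ip_address(ip))

    def is_allocatable(self, ip: str) -> bool:
        """True if `ip` falls inside the block and is not excluded."""
        addr = ipaddress.ip_address(ip)
        if addr in self.excluded:
            return False
        in_cidr = any(addr in net for net in self.cidrs)
        in_range = any(
            lo.version == addr.version and lo <= addr <= hi
            for lo, hi in self.ranges
        )
        return in_cidr or in_range


block = IPBlock()
block.add_cidr("10.0.0.0/24")
block.add_range("192.168.1.10", "192.168.1.20")
block.exclude("10.0.0.5")            # e.g. an address already used elsewhere
print(block.is_allocatable("10.0.0.5"))
print(block.is_allocatable("192.168.1.15"))
```

Excluding individual addresses, rather than carving a block into smaller pieces, is what lets an existing block keep serving its current consumers while reserved IPs are taken out of circulation.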

Furthermore, new integration with Infoblox will provide a “single source of truth” for IPAM and DNS, preventing IP conflicts automatically across your entire environment. This native support for Infoblox IPAM for VPC deployments in VCF 9.1 will allow the user to:

- Discover Infoblox Network Views, DNS Views and Network Containers

- Use Infoblox Network Containers to create Subnets and update VM IP/FQDN information

- Configure the IPAM integration in VCF Automation (IP block definition) or NSX (Infoblox pairing, IP block definition)

Enhanced VPC Network Services

The Distributed Transit Gateway (DTGW) connectivity model for VCF workloads offers simplicity by eliminating the need for NSX edge nodes. However, customers utilizing the DTGW connectivity model were previously limited to basic services such as DHCP and external NAT. VCF 9.1 will make stateful network services available for DTGW deployments through the Virtual Network Appliance (VNA) cluster. In addition to DHCP and external NAT, this cluster will enable stateful services—including various NAT options and load balancing—without complicating the distributed architecture or network configuration.

For customers utilizing the Centralized Transit Gateway design, which uses edge nodes to provide network services, VCF 9.1 will improve network throughput by supporting active-active Tier-0 gateway configurations with up to eight edge nodes. A new VPN service will also be available within the transit gateway to provide secure and flexible connectivity.

Native Networking for VMware vSphere Kubernetes Service (VKS)

High-performance Networking for VKS Clusters

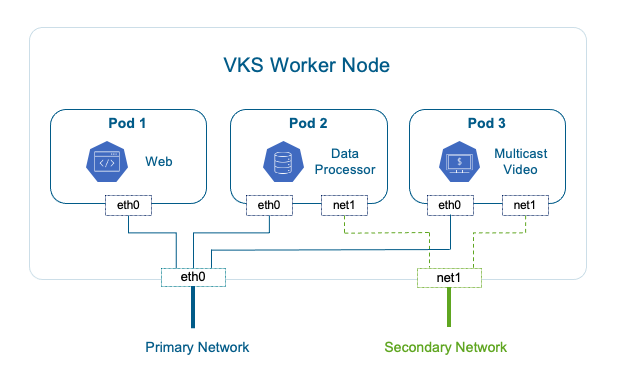

As containerized workloads grow, so does the need for enterprise-grade networking within vSphere Kubernetes Service (VKS). With VCF 9.1, you will be able to deploy any VKS cluster, as well as the pods within it, with a secondary network interface (vNIC) using the VMware Antrea CNI. The secondary NICs of both VKS cluster nodes and pods will then be mapped to VLANs or network subnets in the VCF Virtual Private Cloud (VPC).

This is critical for container-based applications that need to separate different types of traffic such as isolating traffic from primary networks, isolating unicast traffic from multicast traffic to/from container workloads, or direct access to physical systems such as high-performance NFS storage.

Support for Istio Service Mesh

Large-scale environments in banking and other regulated sectors require the security offered by a service mesh. VCF 9.1 will embed lightweight Istio Service Mesh natively, providing mTLS security and traffic management without requiring changes to your application code. Istio Service Mesh will be made available at a per-VKS cluster level for workloads running on vSphere Supervisor. Key functionalities will include:

- mTLS between containers within a VKS cluster

- Sidecar and Istio Ambient (Sidecar-less) mode

- Istio Security and Traffic Management APIs

- Layer 4 and Layer 7 routing

Enhanced Visibility, Planning, and Diagnostics

Flow Analytics and Path Topology

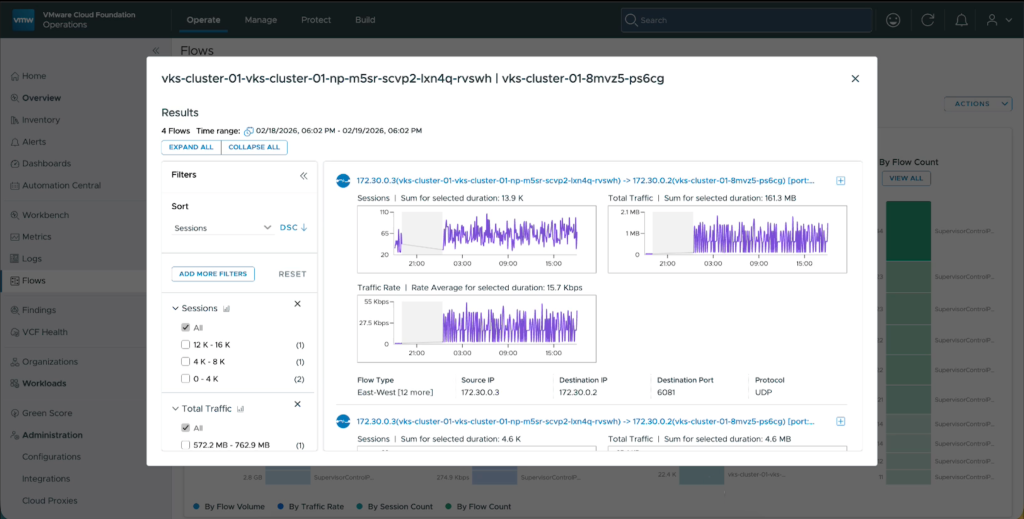

VCF 9.1 will deliver IPFIX support on vSphere Distributed Switch (VDS) and Antrea CNI, enabling granular flow visibility across VMs and VKS pods.

This will help VCF administrators understand the traffic patterns and top talkers and correlate container traffic with VM traffic directly in the VCF Operations console. It will also provide traffic path visibility for troubleshooting performance issues.
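For readers less familiar with flow analytics, the short Python sketch below shows the kind of aggregation behind a "top talkers" view: summing bytes per source across exported flow records and ranking the results. It is a simplified illustration, not how VCF Operations processes IPFIX data. The `FlowRecord` type, its field names, and the sample endpoints are assumptions made for this example.

```python
from collections import Counter
from typing import Iterable, List, NamedTuple, Tuple


class FlowRecord(NamedTuple):
    """Hypothetical, simplified flow record (real IPFIX records carry many more fields)."""
    src: str         # source endpoint, a VM or a pod
    dst: str         # destination endpoint
    byte_count: int  # bytes observed for this flow


def top_talkers(flows: Iterable[FlowRecord], n: int = 3) -> List[Tuple[str, int]]:
    """Rank source endpoints by total bytes sent across all their flows."""
    totals = Counter()
    for flow in flows:
        totals[flow.src] += flow.byte_count
    return totals.most_common(n)


# VM and pod flows land in the same ranking, which is what allows
# container traffic to be correlated with VM traffic.
flows = [
    FlowRecord("vm-a", "pod-x", 500),
    FlowRecord("pod-x", "vm-a", 200),
    FlowRecord("vm-a", "vm-b", 300),
    FlowRecord("vm-b", "vm-a", 100),
]
print(top_talkers(flows, n=2))
```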

Network Assessment and VPC Planning

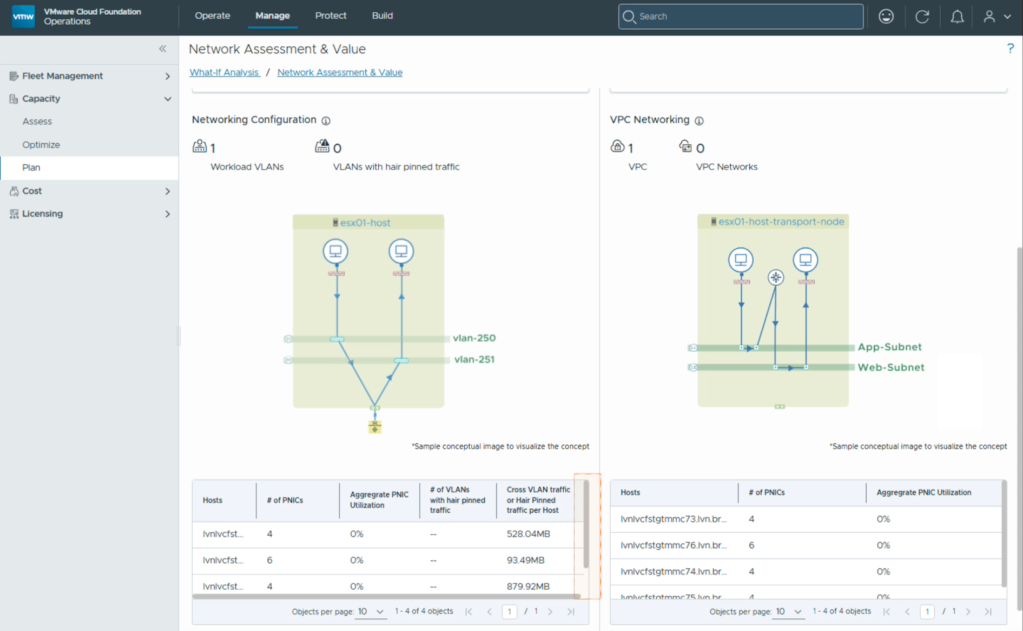

Thinking of moving from traditional vSphere networking to VPCs? VCF 9.1 will include a Network Assessment and Value report that evaluates your current traffic and provides a guided transition plan.

Key capabilities will include:

- Assessment and Value: Assess existing network architecture and configuration, including vSphere Distributed Switch (VDS) and vSphere clusters, to evaluate potential improvements in server consolidation, workload mobility, and traffic hair-pinning through the physical infrastructure. This will allow VCF users to monitor east-west and north-south traffic flows without challenging, time-consuming manual processes, and to optimize infrastructure costs.

- VPC Planning and Adoption: Design and plan the transition from vSphere networking (VDS) to VPC-based networking. VPC planning in VCF 9.1 will analyze your existing network infrastructure, including VLANs, network traffic patterns, and reachability, and provide users with the networking parameters required to create VPCs. This will help drive server consolidation and workload mobility, logical workload isolation, and self-service for developers.

Network Diagnostics and Findings

VCF 9.1 will introduce an overall health dashboard that provides visibility into critical issues impacting virtual network appliances and capabilities. This will include out-of-the-box dashboards for network inventory, capacity monitoring, and detailed metrics for the NSX Edge appliance and ESX host networking. Users will be able to run integrated ODS runbooks and triage issues quickly. A troubleshooting workbench will centralize VCF-wide networking alerts, metrics, and logs.

Conclusion: Efficiency at Scale

VCF 9.1 is more than just a collection of features – it’s a strategic shift toward a self-service, automated private cloud that is deployable at scale. With tighter integration with the physical fabric, flexible connectivity options and network services, native networking support for VKS container workloads, and enhanced planning and diagnostics, organizations can finally bridge the skills gap, lower their TCO, and get applications to market faster than ever before.

Ready to simplify your network? Check out the full VCF 9.1 release notes and other resources linked below to see how these features can transform your private cloud.

Learn More

- Press Release: Broadcom announces VMware Cloud Foundation 9.1

- Datasheet: VCF Networking

- VCF Networking Overview: VMware Cloud Foundation Networking webpage

- VCF 9.1 Blog: VCF 9.1 Blog Series
