By Jonathan McDonald

Jonathan McDonald is a Technical Solutions Architect for the Professional Services Engineering team. He currently specializes in developing architecture designs for core Virtualization, and Software-Defined Storage, as well as providing best practices for upgrading and health checks for vSphere environments.

This is part one of a two-part blog series, as there was just too much detail for a single post. I want to start off by saying that all of the details here are based on my own personal experiences. It is not meant to be a comprehensive guide for setting up stretch clustering for Virtual SAN, but a set of pointers to show the type of detail that is most commonly asked for. Hopefully it will help prepare you for any projects that you are working on.

Most recently in my day-to-day work I was asked to travel to a customer site to help with a Virtual SAN implementation. It was not until I got on site that I was told that the idea for the design was to use the new stretch clustering functionality that VMware added to the Virtual SAN 6.1 release. This functionality has been discussed by other folks in their blogs, so I will not reiterate much of the detail from them here. In addition, the implementation is very thoroughly documented by the amazing Cormac Hogan in the Stretched Cluster Deployment Guide.

What this blog is meant to be is a guide to some of the most important design decisions that need to be made. I will focus on the most recent project I was part of; however, the design decisions are pretty universal. I hope that the detail will help people avoid issues such as the ones we ran into while implementing the solution.

Background

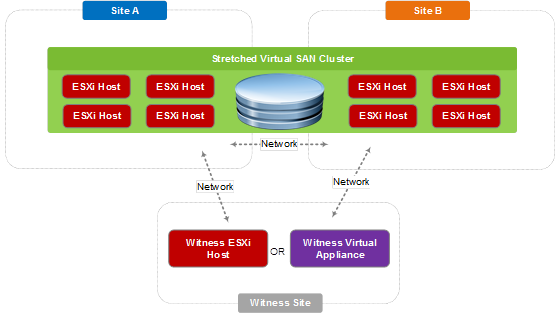

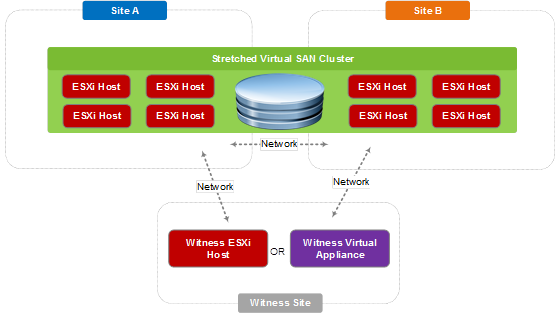

For anyone not aware of the stretch clustering functionality, I wanted to provide a brief overview. Most of the details you already know about Virtual SAN still remain true. What it really amounts to is a configuration that allows two sites of hosts connected with a low-latency link to participate in a Virtual SAN cluster, together with an ESXi host or witness appliance that exists at a third site. This cluster is an active/active configuration that provides a new level of redundancy, such that if one of the two sites has a failure, the other site will immediately be able to recover the virtual machines from the failed site using VMware High Availability.

The configuration looks like this:

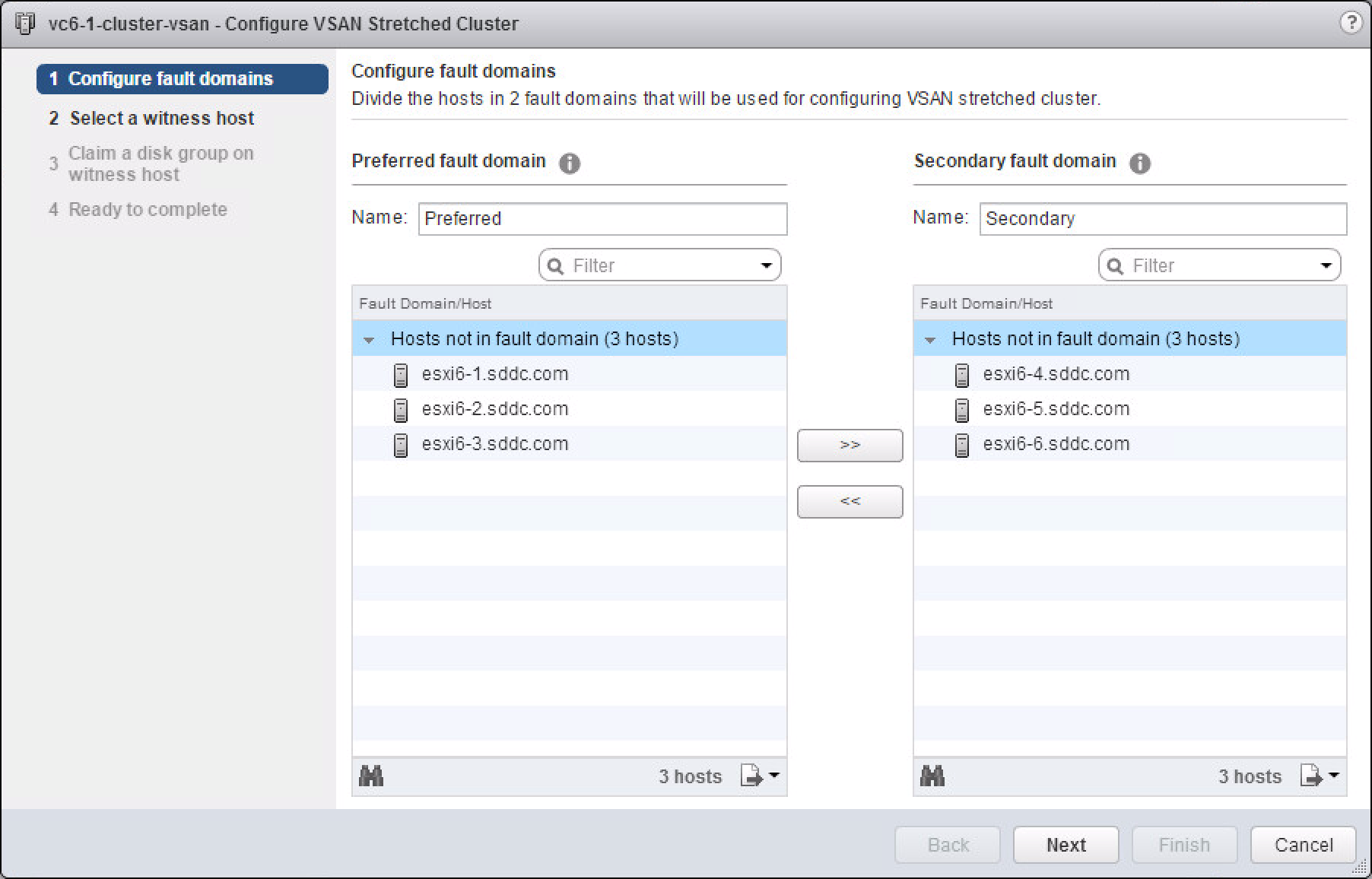

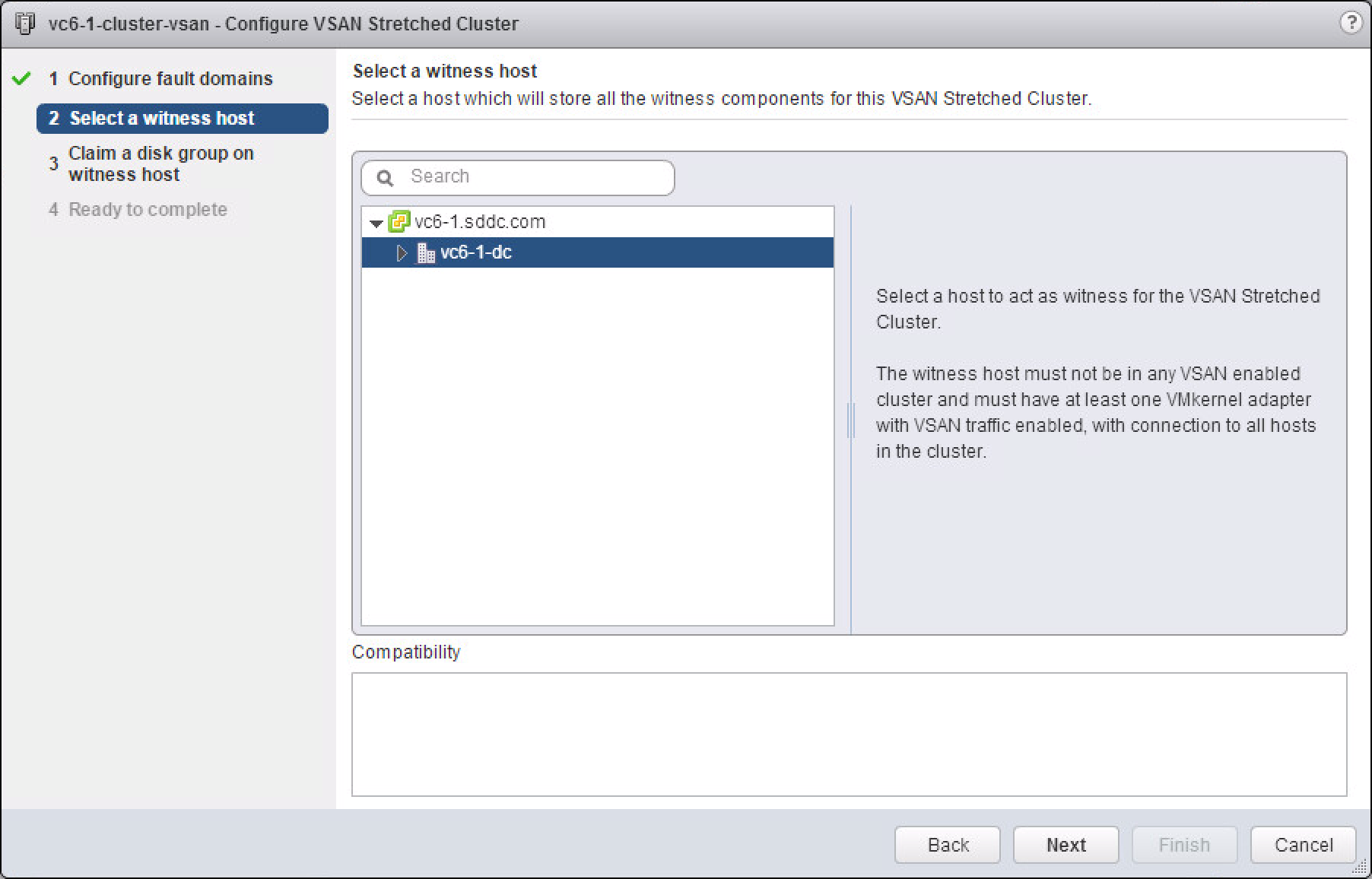

This is accomplished by using fault domains and is configured directly from the fault domain configuration page for the cluster.

Each site is its own fault domain, which is why the witness is required. The witness functions as the third fault domain and is used to host the witness components for the virtual machines in both sites. In Virtual SAN Stretched Clusters, there is only one witness host in any configuration.
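To illustrate why the third fault domain matters, here is a minimal sketch of the majority reasoning. It rests on a simplifying assumption of one vote per fault domain, and the function names are hypothetical; it is not how Virtual SAN itself is implemented.

```python
def has_quorum(available_votes: int, total_votes: int) -> bool:
    """True when strictly more than half of the votes are still reachable."""
    return 2 * available_votes > total_votes


def survives_any_single_failure(fault_domains: list[str]) -> bool:
    """Check whether losing any one fault domain still leaves a majority.

    Simplifying assumption: every fault domain carries exactly one vote.
    """
    total = len(fault_domains)
    return all(has_quorum(total - 1, total) for _ in fault_domains)


two_sites_only = ["site-a", "site-b"]
with_witness = ["site-a", "site-b", "witness"]

print(survives_any_single_failure(two_sites_only))  # False: 1 of 2 is not a majority
print(survives_any_single_failure(with_witness))    # True: 2 of 3 is a majority
```

With only two data sites, the surviving site cannot tell a remote failure from a network partition; the witness provides the tie-breaking vote.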

For deployments that manage multiple stretched clusters, each cluster must have its own unique witness host.

The nomenclature used to describe a Virtual SAN Stretched Cluster configuration is X+Y+Z, where X is the number of ESXi hosts at data site A, Y is the number of ESXi hosts at data site B, and Z is the number of witness hosts at site C.

Finally, with stretch clustering, the current maximum configuration is 31 nodes (15 + 15 + 1 = 31 nodes). The minimum supported configuration is 1 + 1 + 1 = 3 nodes. This can be configured as a two-host Virtual SAN cluster, with the witness appliance as the third node.
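As a quick sanity check during design, the X+Y+Z limits described above can be captured in a few lines. This is only a sketch: the function and constant names are made up for illustration, and the limits are the ones quoted in this post for Virtual SAN 6.1.

```python
# Limits as stated in this post for Virtual SAN 6.1 stretched clusters.
MIN_HOSTS_PER_DATA_SITE = 1
MAX_HOSTS_PER_DATA_SITE = 15
WITNESS_HOSTS_REQUIRED = 1


def validate_stretched_cluster(x: int, y: int, z: int) -> list[str]:
    """Return a list of problems with an X+Y+Z layout (empty list means OK)."""
    problems = []
    for site, count in (("A", x), ("B", y)):
        if not MIN_HOSTS_PER_DATA_SITE <= count <= MAX_HOSTS_PER_DATA_SITE:
            problems.append(
                f"data site {site} has {count} hosts; expected "
                f"{MIN_HOSTS_PER_DATA_SITE} to {MAX_HOSTS_PER_DATA_SITE}"
            )
    if z != WITNESS_HOSTS_REQUIRED:
        problems.append(f"expected exactly one witness host, got {z}")
    return problems


def describe(x: int, y: int, z: int) -> str:
    """Format a layout using the X+Y+Z nomenclature."""
    return f"{x}+{y}+{z} ({x + y + z} nodes)"


print(describe(15, 15, 1), validate_stretched_cluster(15, 15, 1))
print(describe(16, 15, 1), validate_stretched_cluster(16, 15, 1))
```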

With all these considerations, let’s take a look at a few of the design decisions and issues we ran into.

Hosts, Sites and Disk Group Sizing

The first question that came up—as it almost always does—is about sizing. This customer initially used the Virtual SAN TCO Calculator for sizing and the hardware was already delivered. Sounds simple, right? Well perhaps, but it does get more complex when talking about a stretch cluster. The questions that came up regarded the number of hosts per site, as well as how the disk groups should be configured.

Starting off with the hosts, one of the big things discussed was the possibility of having more hosts in the primary site than in the secondary. For stretch clusters, an identical number of hosts in each site is a requirement. This makes it a lot easier from a decision standpoint, and when you look closer the reason becomes obvious: with a stretched cluster, you have the ability to fail over an entire site. Therefore, it is logical to have identical host footprints.

With disk groups, however, the decision point is a little more complex. Normally, my recommendation here is to keep everything uniform. Thus, if you have 2 solid state disks and 10 magnetic disks, you would configure 2 disk groups with 1 solid state disk and 5 magnetic disks each. This prevents unbalanced utilization of any one component type, regardless of whether it is a disk, disk group, host, network port, etc. To be honest, it also greatly simplifies much of the design, as each host/disk group can expect an equal amount of love from vSphere DRS.

In this configuration, though, it was not so clear, because one additional disk was available and the disks could not be divided equally. After some debate, we decided to keep one disk as a “hot spare,” so there was an equal number of disk groups, and of disks per disk group, on all hosts. This turned out to be a good thing; see the next section for details.
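The arithmetic behind that decision is simple enough to sketch. The example below assumes one solid state disk per disk group, as in the 2 + 10 example above. The 11-disk figure is that example plus the one additional disk, used here purely for illustration, and the function name is hypothetical.

```python
def plan_disk_groups(cache_disks: int, capacity_disks: int) -> dict:
    """Split capacity disks evenly across disk groups; leftovers become spares.

    Assumes one cache (solid state) disk per disk group.
    """
    if cache_disks < 1:
        raise ValueError("at least one cache disk is needed to form a disk group")
    per_group, spare = divmod(capacity_disks, cache_disks)
    return {
        "disk_groups": cache_disks,
        "capacity_disks_per_group": per_group,
        "spare_disks": spare,
    }


# The uniform case: 2 SSDs and 10 magnetic disks.
print(plan_disk_groups(2, 10))
# One extra magnetic disk: same layout, with one disk left over as a spare.
print(plan_disk_groups(2, 11))
```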

In the end, much of this is the standard approach to Virtual SAN configuration, so other than site sizing, there was nothing really unexpected.

Booting ESXi from SD or USB

I don’t want to get too in-depth on this, but briefly, when you boot an ESXi 6.0 host from a USB device or SD card, Virtual SAN trace logs are written to a RAM disk, and the logs are not persistent. This actually serves to preserve the life of the device, as the amount of data being written can be substantial. When running in this configuration, these logs are automatically offloaded to persistent media during shutdown or a system crash (PANIC). If you have more than 512 GB of RAM in the hosts, you are unlikely to have enough space to store this volume of data, because these devices are generally not that large. Therefore, logs, Virtual SAN trace logs, or core dumps may be lost or corrupted because of insufficient space, and the ability to troubleshoot failures will be greatly limited.

So, in these cases it is recommended to configure a drive for the core dump and scratch partitions. This is also the only supported method for handling Virtual SAN traces when booting ESXi from a USB stick or SD card.
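As a rough design-time check, the rule of thumb above can be written down as follows. This is only a sketch of the reasoning in this post: the function name and the boot device labels are hypothetical, and the 512 GB threshold is the figure quoted above.

```python
# Boot media for which traces go to a RAM disk (labels are illustrative).
USB_SD_BOOT_DEVICES = {"usb", "sd"}
LARGE_MEMORY_THRESHOLD_GB = 512  # figure quoted in this post


def needs_dedicated_scratch_drive(boot_device: str, ram_gb: int) -> bool:
    """Flag hosts where a separate drive for scratch and core dumps is advisable.

    True when the host boots from USB/SD and has more RAM than the threshold.
    """
    boots_from_flash = boot_device.lower() in USB_SD_BOOT_DEVICES
    return boots_from_flash and ram_gb > LARGE_MEMORY_THRESHOLD_GB


print(needs_dedicated_scratch_drive("sd", 768))    # True
print(needs_dedicated_scratch_drive("disk", 768))  # False
print(needs_dedicated_scratch_drive("usb", 256))   # False
```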

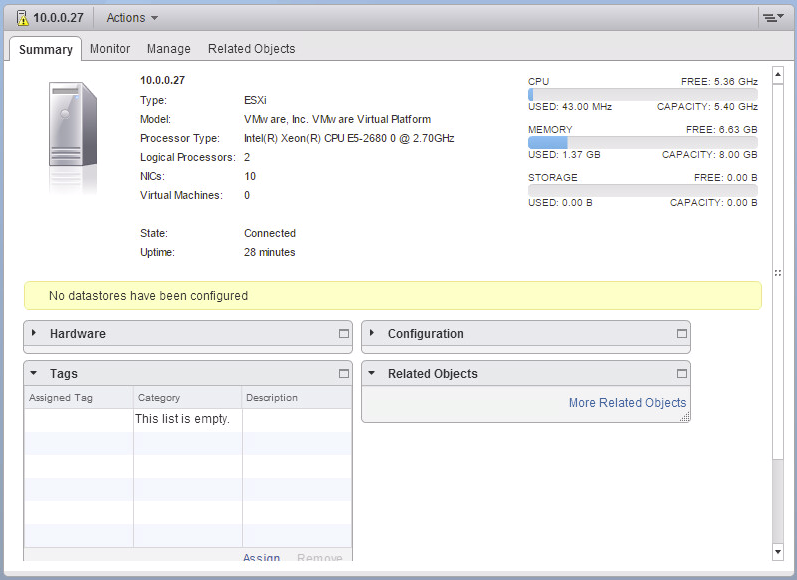

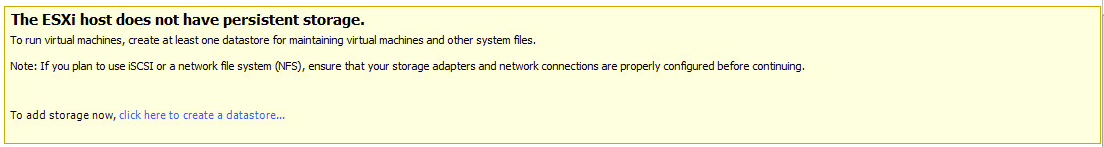

That being said, when we were in the process of configuring the hosts in this environment, we saw the “No datastores have been configured” warning message pop up – meaning persistent storage had not been configured. This triggered the whole discussion; the error is similar to the one in the vSphere Web Client.

In the vSphere Client, this error also comes up when you click the Configuration tab:

The spare disk turned out to be useful because we were able to use it to configure the ESXi scratch and core dump partitions. This is not to say we were seeing crashes, or even expected to; in fact, we saw no unexpected behavior in the environment up to this point. Rather, since this was a new environment, we wanted to ensure we’d have the ability to quickly diagnose any issue, and having this configured up-front saves significant time in support. This is of course speaking from first-hand experience.

In addition, syslog was set up to export logs to an external source at this time. Whether using the syslog service that is included with vSphere, or vRealize Log Insight (amazing tool if you have not used it), we were sure to have the environment set up to quickly identify the source of any problem that might arise.

For more details on this, see the following KB articles for instructions:

- Storing ESXi coredump and scratch partitions in Virtual SAN

- Creating a persistent scratch location for ESXi 4.x/5.x/6.0

I guess the lesson here is that when you are designing your Virtual SAN cluster, make sure you remember that having persistence available for logs, traces and core dumps is a best practice. If you have a large memory configuration, the easiest way to achieve this is to install ESXi and the scratch/core dump partitions to a hard drive. This also simplifies post-installation tasks, and will ensure you can collect all the information support might require to diagnose issues.

Witness Host Placement

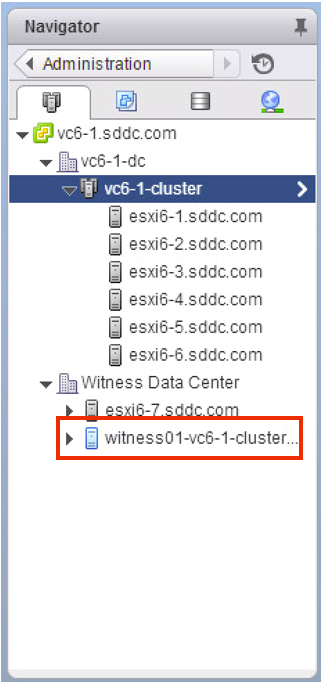

The witness host was the next piece we designed. Officially, the witness must be in a distinct third site in order to properly detect failures. It can either be a full host or a virtual appliance residing outside of the Virtual SAN cluster. The cool thing is that if you use an appliance, it actually appears differently in the Web Client:

For the witness host in this case, we decided to use the witness appliance rather than a full host. This way, it could be migrated easily, because the networking to the third site was not set up yet. As a result, for the initial implementation while I was onsite, the witness was local to one of the sites, and would be migrated as soon as the networking was set up. This is definitely not a recommended configuration, but for testing, or for a non-production proof-of-concept, it does work. Keep in mind that with the witness co-located at one of the data sites, a failure of that site may not be properly detected.

With this, I conclude Part 1 of this blog series; hopefully, you have found this useful. Stay tuned for Part 2!

This blog post originally appeared on the VMware Consulting Blog.