In our everyday lives, both individually and as a society, we want to know how well we are doing. We track our financial accounts and health monitors, follow inflation and market rates, and watch system utilization, return on investment, and so on, to help decide what actions to take. Hence, we call them Key Performance Indicators. This is where the value of these performance indicators lies: they guide us to take action. Choosing the right ones, based on reliable sources, is key to their value.

This blog is the first of a three-part series in which we will cover:

- Background of KPIs, Metrics, Indexes, etc. that are part of our everyday lives

- Definitions of KPIs, Metrics to clarify some misunderstandings and show how they relate

- Need to tier, stratify, organize KPIs based on their audiences, stakeholders and required actions

- Best practices for defining and organizing KPIs and their metrics to support performance, financial, and partner reporting

The subsequent blogs will cover:

- Approach to defining and implementing a KPI service

- Challenges (there always are) around collecting the data needed to implement KPIs and dashboards

- Relevant examples of KPIs used for tracking customer success

- Tangible and intangible KPIs, metrics and sources

- Product / Service-specific examples

- Sample dashboard designs

Background

In general, metrics and KPIs let us know how well we are doing.

For example, we are concerned about miles per gallon for our cars, how well our investments and retirement accounts are progressing, etc. Our governments report to us in terms of gross national product (GNP) and stock market indexes, which inform us of the economy’s health. Our customers have the same need: they want to know how well a project is going, how to measure its progress, and what value its implementation delivers.

To answer these questions, we need data. It is all about the data. Ideally, these data are generated and collected automatically, to avoid tedious manual tasks that are prone to error, and are categorized to make analyses more meaningful.

Data becomes information at the point where it is analyzed and becomes useful in determining what is going on and what actions to take.

Definitions

But first, let’s define some terms.

Simply put, metrics are the numbers of things and are usually represented by “#” signs. Ideally, we collect these metrics in an automated way from systems, monitors, validated data entry, etc. Some examples from our digital world might be the number of virtual desktop users, the number of virtual machines, etc.

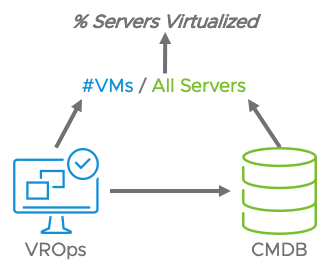

Key Performance Indicators (KPIs) are the averages or percentages of things and are usually represented by “%” signs. They are calculated from a combination of metrics that can come from multiple systems. Continuing with the above metrics examples, the KPI counterparts would be the % of users using virtual desktops and the % of servers that are virtualized.

Note that in the two KPI examples, to determine those percentages, we need data sources other than the VDI or VMware systems as these systems only tell us half the story. The other half would come from enterprise systems, such as a CMDB reporting on the totality of desktops, users, servers, and so on.
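As a minimal sketch of this relationship, the snippet below derives the two example KPIs from metrics held in two separate sources. The dictionary names, keys, and counts are hypothetical stand-ins for whatever your VDI, virtualization, and CMDB systems actually report; they are not taken from any real product API.

```python
def percentage_kpi(numerator: int, denominator: int) -> float:
    """Turn two count metrics into a percentage KPI."""
    if denominator <= 0:
        raise ValueError("denominator metric must be a positive count")
    return 100.0 * numerator / denominator


# Hypothetical metrics from the virtualization platforms (half the story).
platform_metrics = {"virtual_desktop_users": 1200, "virtual_servers": 450}

# Hypothetical totals from an enterprise source such as a CMDB (the other half).
cmdb_metrics = {"total_users": 4000, "total_servers": 600}

pct_virtual_desktop_users = percentage_kpi(
    platform_metrics["virtual_desktop_users"], cmdb_metrics["total_users"]
)
pct_servers_virtualized = percentage_kpi(
    platform_metrics["virtual_servers"], cmdb_metrics["total_servers"]
)

print(f"% of users using virtual desktops: {pct_virtual_desktop_users:.1f}%")
print(f"% of servers virtualized: {pct_servers_virtualized:.1f}%")
```

Neither KPI can be computed from the platform metrics alone; the denominator always comes from the second source.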

One quasi-exception I would like to mention here is a value that we use as a metric but that is represented like a KPI: % CPU time. At first, it appears to be a KPI because it is represented by a % sign. When captured, % CPU time is actually an average over very granular events lasting microseconds. However, we use it as a metric at the summary level of minutes.

Tiering

KPIs, especially when reported through dashboards, should be organized and tiered according to their relevance to each audience. In the business world, we have the hierarchy of executives, management, and line managers, as well as each group’s partner and vendor counterparts. It is a best practice to categorize the KPIs in the same structure.

We recommend providing insight to your vendor and partner support teams as these metrics and KPIs will help them in providing proactive support and product improvement.

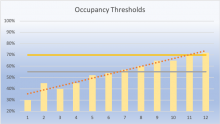

In this example, we have service management processes and functions. At the operational level, the concern is the health of the specific process. Abstracting the data up a level to the managers, we have broader KPIs that are evaluated over periods to indicate trends. These trends show a direction of behavior that may call for action to change it. For example, a decrease in customer satisfaction may be due to projects and provisioning taking longer. Adding resources to provisioning and development could reverse that trend. Executives need to evaluate status quickly. Usually, positive indicators require no further questions. However, as things turn from green to yellow, they need to be able to drill down into the tiers of KPIs to understand why those higher-level KPIs are the way they are.
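The tiering and drill-down described above can be sketched as a small tree, assuming each higher-level KPI simply takes the worst status of the KPIs beneath it. That roll-up rule, the three status colors, and every KPI name here are illustrative assumptions, not a description of any particular dashboard product.

```python
from dataclasses import dataclass, field
from typing import List

# Ordering used to pick the "worst" status when rolling up.
STATUS_ORDER = {"green": 0, "yellow": 1, "red": 2}


@dataclass
class Kpi:
    name: str
    tier: str  # "executive", "management", or "operational"
    status: str = "green"  # the KPI's own status before roll-up
    children: List["Kpi"] = field(default_factory=list)

    def rolled_up_status(self) -> str:
        """Worst status among this KPI and everything beneath it."""
        statuses = [self.status] + [c.rolled_up_status() for c in self.children]
        return max(statuses, key=STATUS_ORDER.__getitem__)


def drill_down(kpi: Kpi, depth: int = 0) -> List[str]:
    """List only the branches that explain a non-green status."""
    lines: List[str] = []
    status = kpi.rolled_up_status()
    if status != "green":
        lines.append(f"{'  ' * depth}{kpi.name} [{kpi.tier}]: {status}")
        for child in kpi.children:
            lines.extend(drill_down(child, depth + 1))
    return lines


# Hypothetical hierarchy echoing the customer satisfaction example.
customer_satisfaction = Kpi(
    "Customer Satisfaction", "executive",
    children=[
        Kpi("Provisioning Time Trend", "management",
            children=[Kpi("Provisioning Process Health", "operational", "yellow")]),
        Kpi("Incident Resolution Trend", "management",
            children=[Kpi("Incident Process Health", "operational", "green")]),
    ],
)

for line in drill_down(customer_satisfaction):
    print(line)
```

An executive sees a single yellow indicator, and drilling down shows only the provisioning branch, since the incident branch is still green.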

Best Practices

Over the years, we at VMware have learned some lessons in generating KPIs and dashboards. Among the top ones are to:

- Keep the KPIs actionable. KPIs should provide insight into what type of remediation is needed. We should never get a “so what” reaction to a KPI. An actionable example might be to add more capacity in the case of a KPI showing threshold utilization. Another, as cited before, might be a reduction in customer satisfaction requiring more resources and tighter SLA compliance. We also recommend that at least one finance-related KPI, such as % Savings, be included to validate the business case and management expectations.

- Ensure that the KPIs, at the management level, are trended over time. They tell the story. Showing KPIs over time indicates progress and direction. The time periods should also be meaningful. In some industries, the previous week or quarter is not as meaningful as this time last year. The retail industry is especially concerned about same-store sales by parallel season.

- Keep the KPIs like to like, apples to apples. Comparisons should always be on a common unit level, such as average cost per user or average cost per typical VM. Examples of not doing so are plentiful and can be, and often are, used to mislead. One example that comes to mind is that of a major bank where the cost of IT was rising year over year by 10%. Executive management, in an effort to contain costs and justify eliminating IT, outsourced the entire infrastructure in exchange for a guaranteed, predictable charge. What they did not realize was that IT costs were growing linearly while the number of transactions was growing exponentially. In essence, the cost per transaction was falling dramatically. In the outsourced relationship, that transaction growth was charged incrementally on top of the contract, and the result was higher overall costs. If the comparisons had been made on transaction costs to start with, the bad outsourcing deal would have been avoided.

- Know your audience. Make sure that their questions and concerns are being answered. If there is a major project taking place, such as data center consolidations, make sure that there are KPIs relating to its progress. Study the backlog of requests for additional, ad hoc reports to determine whether some of these should become part of the standard set. Include your partners’ and vendors’ interests to enable quality support.

- Make sure your audience gets their concerns answered upfront. A user should not have to page down multiple screens or pages to find what they need. It is part of knowing your audience.

- Always allow for drill down. It is a fact that most reports generate more questions than they answer. In having a drill-down capability, those questions can be answered on the spot.

- Lifecycle the KPIs. Nothing is forever. Even the need for certain KPIs comes and goes. Some KPIs may be needed for the current culture or issues. Periodic reviews should take place to determine relevancy. For example, a major data center relocation project may have executive-level attention, and the dashboards should have that effort’s KPIs upfront. Once the project is completed, they are no longer necessary.

- Build a dictionary. All KPIs should be defined, sources identified, and transformation algorithms detailed. Most metrics data need to be associated with metadata detailing for whom, where, and what that metric is about. There are data extraction and loading processes linking those elements and rationalizing them across multiple sources. These processes and algorithms must be documented and available in a glossary so that the user or auditor can be informed of the sources and transformations the KPIs represent. A simple example here is where a % Availability KPI showed 100%, but developers were complaining about access. It turned out that the % Availability KPI was for production systems only. That one got renamed to % Production Availability.
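Two of the practices above, comparing like to like and building a dictionary, can be sketched together. The snippet below is only an illustration: the `KpiDefinition` fields, the source names, and all of the yearly figures are made up for the example, chosen so that total cost grows by a fixed amount each year while transactions double, as in the bank story.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class KpiDefinition:
    """One dictionary entry: what the KPI means and how it is derived."""
    name: str
    description: str
    scope: str            # which systems or users the KPI covers
    sources: List[str]    # where the underlying metrics come from
    formula: str          # human-readable transformation
    compute: Callable[[Dict[str, float]], float]


cost_per_transaction = KpiDefinition(
    name="Cost per Transaction",
    description="Total IT cost divided by transactions processed.",
    scope="Production systems only (hypothetical scope)",
    sources=["finance ledger (hypothetical)", "transaction monitor (hypothetical)"],
    formula="it_cost / transactions",
    compute=lambda m: m["it_cost"] / m["transactions"],
)

# The dictionary itself: look up any KPI by name to see its definition.
kpi_dictionary = {cost_per_transaction.name: cost_per_transaction}

# Made-up yearly metrics: cost rises linearly, transactions double.
yearly_metrics = [
    {"year": 1, "it_cost": 100_000_000, "transactions": 50_000_000},
    {"year": 2, "it_cost": 110_000_000, "transactions": 100_000_000},
    {"year": 3, "it_cost": 120_000_000, "transactions": 200_000_000},
]

unit_costs = [cost_per_transaction.compute(m) for m in yearly_metrics]
for m, unit_cost in zip(yearly_metrics, unit_costs):
    print(f"Year {m['year']}: total ${m['it_cost']:,} | "
          f"per transaction ${unit_cost:.2f}")
```

With these numbers the total cost rises every year while the cost per transaction falls, which is the comparison that was missing in the outsourcing decision. Recording the scope alongside the formula is what would have flagged the production-only % Availability KPI.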

Summary

In this first installment of our blog series, we discussed the importance and relevance of KPIs in our lives and work. They tell us how well we are doing and help guide decisions. They should be meaningful, actionable, calculated from operational metrics, and tiered according to the level of concern of your audience, partners, and vendors. Key to their success are the accuracy of the collected data and the levels of comparison. VMware Professional Services can help you understand how to incorporate KPIs and metrics in your existing processes. Contact your VMware representative to learn more.

About the Author

Norman Dee is a Staff Architect covering transformation enablement services within the IT and digital workspaces. He is a specialist in Operating Model, Cloud Management, IT Financial Management/Business Case and Capacity Planning. In his role, he helps enterprises fully understand the technical and financial benefits of operating model and infrastructure transformations. His career has spanned roles from directing worldwide projects to CTO/CIO, across multiple industries including financial services, media, publishing and online retail.

His experience includes IT-as-a-Service transformation, IT Service Management processes, managing overall distributed strategies, infrastructure management & chargeback, e-Commerce, application development life cycle, QA, network modeling, and capacity planning.