VCD 10.2.2 was released on April 8 with several improvements to the Tanzu integration. In VCD 10.2, we saw that with vSphere with Kubernetes (Tanzu Basic), providers could leverage improved governance and ensure availability and security. Kubernetes-specific policies allow for improved resource allocation and, in effect, cost control. The tight coupling with NSX-T allows for automated load balancing and networking policies for the created Kubernetes clusters.

We also acknowledged that Tanzu Kubernetes clusters reduce the engineering/DevOps effort needed to manage role-based access control and the CNI plugin in the Kubernetes cluster. The integration with VCD allows DevOps engineers and developers to test and deploy applications through kubeconfig. Tenant users can also scale worker nodes with one click. VMware Cloud Director 10.2.2 was released with some significant advancements for Tanzu Basic. This blog post covers the network and security isolation of guest Kubernetes clusters.

Starting with VCD 10.2.2 and vSphere 7u1c/u2, VCD manages the network isolation between different tenant clusters. The SNAT rules allow inbound access to all Kubernetes clusters within the customer organization. The gateway firewall rule ensures ingress access to each Kubernetes cluster within an organization. VCD manages these configurations, and the gateway rules follow the lifecycle of the tenant organization’s Kubernetes policy. The gateway firewall rules and SNAT rules are auto-synchronized when the provider administrator reconnects VCD to vSphere. This auto-synchronization keeps the network isolation for the customers’ guest K8s clusters automated. Figure 1 shows the network isolation steps managed by VCD, marked with numbers 1 and 2.

Tenant isolation restricts access to the Kubernetes cluster from networks that are not part of the tenant organization. For this reason, customers can use a jump box within the tenant organization to access the cluster. The tenant administrator can install kubectl on the jump host to access their Kubernetes clusters.
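As a rough conceptual model of this isolation, the sketch below checks whether a source address belongs to one of the tenant organization's networks. It only illustrates the idea and is not how VCD or NSX-T implements the gateway firewall; the function name, the CIDR range, and the sample addresses are all assumptions made for the example.

```python
import ipaddress

# Hypothetical tenant organization network; substitute your own range.
TENANT_NETWORKS = ["192.168.10.0/24"]


def is_source_allowed(source_ip, tenant_networks):
    """Return True if source_ip falls inside any tenant network (CIDR strings)."""
    addr = ipaddress.ip_address(source_ip)
    return any(addr in ipaddress.ip_network(cidr) for cidr in tenant_networks)


# A jump box inside the tenant organization can reach the cluster.
print(is_source_allowed("192.168.10.25", TENANT_NETWORKS))  # True
# A host outside the tenant organization is blocked.
print(is_source_allowed("203.0.113.7", TENANT_NETWORKS))    # False
```

This is why a jump host with kubectl installed has to sit on a network that belongs to the tenant organization.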

Exposing a Service on a TKG cluster using an External Network:

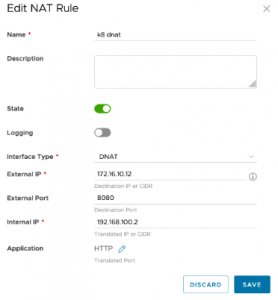

When the customer is ready to expose a service running on the Kubernetes cluster, they can follow these steps:

- Fetch the IP address of the service using the kubectl get svc command (record the External IP of your service). This IP address belongs to the Supervisor cluster’s Ingress IP address range.

- Select an available IP address from the External Network of the Organization VDC.

- Create a DNAT rule to map the Kubernetes cluster’s service to the External Network IP address with your choice of service port, as shown in Figure 3.
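The steps above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the VCD API: it reads the JSON that kubectl get svc <name> -o json would print, picks a free address from a hypothetical External Network pool, and assembles a plain dictionary describing the DNAT mapping. The helper names, the dictionary keys, and all IP addresses are made up for the example; the actual rule is created on the gateway through the VCD UI.

```python
import ipaddress
import json


def service_external_ip(service_json):
    """Extract the external IP from `kubectl get svc <name> -o json` output."""
    svc = json.loads(service_json)
    ingress = svc.get("status", {}).get("loadBalancer", {}).get("ingress") or []
    if not ingress or "ip" not in ingress[0]:
        raise ValueError("service has no external IP assigned yet")
    return ingress[0]["ip"]


def pick_available_ip(pool, in_use):
    """Return the first address in pool that is not already in use."""
    used = {ipaddress.ip_address(ip) for ip in in_use}
    for candidate in pool:
        if ipaddress.ip_address(candidate) not in used:
            return candidate
    raise ValueError("no free address left in the external network pool")


def build_dnat_rule(external_ip, service_ip, port):
    """Describe the DNAT mapping (hypothetical structure, not a VCD schema)."""
    return {
        "type": "DNAT",
        "external_address": external_ip,
        "internal_address": service_ip,
        "port": port,
    }


# Sample data standing in for real kubectl output and a real IP pool.
SAMPLE_SERVICE = json.dumps({
    "kind": "Service",
    "spec": {"type": "LoadBalancer", "ports": [{"port": 80}]},
    "status": {"loadBalancer": {"ingress": [{"ip": "192.0.2.10"}]}},
})

service_ip = service_external_ip(SAMPLE_SERVICE)
external_ip = pick_available_ip(
    pool=["198.51.100.5", "198.51.100.6"],
    in_use=["198.51.100.5"],
)
print(build_dnat_rule(external_ip, service_ip, port=80))
```

Keeping the three steps as separate functions mirrors the manual procedure, so each one can be checked on its own before the rule is created on the gateway.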

To summarize, the provider can provide network and security isolation, and customers can securely expose services through a NAT rule on the Tier-1 gateway of the customer organization.

Further reading: