by: VMware Application Developer Abhishek Anand

The VMware HR-Legal IT team supports many custom applications that fulfill our core business requirements. These were monolithic applications running on virtual machines (VMs). In 2021, we embarked on a journey to modernize them and make them cloud native.

The challenge of monolithic architecture

The server-side application is a monolith: a single logical executable. Any change to the system required building and deploying a new version of the server-side application.

Modularity within the application is typically based on features of the programming language (e.g., packages and modules). Over time, the monolith grows larger as business needs change and new functionality is added. It becomes increasingly difficult to maintain a good modular structure, and harder to keep a change that should affect only one module confined to that module.

Even a small change to the application requires the entire monolith to be rebuilt and redeployed.

Every year at quarter end, demand for reports in the Take1 and Wellbeing applications spikes, and new benefits announcements drive heavy traffic to the Benefits site. These surges caused several outages. To absorb them, we had to procure new VMs and scale up the entire application, which took a few days of effort.

How we solved it

- Containerize the application

Before containers, an application was deployed to each environment by updating the existing virtual machines. The deployment was slow and frequently failed due to transient timeout errors during the update process.

First, we minimized the number of code changes during containerization. This reduced complexity and allowed us to regression test before and after replatforming from VMs to containers. Once the application was running in containers in production, we used the benefits of containers to refactor and rearchitect it. Because this was a "lift-and-shift" migration, the existing application dictated the operating system and libraries, so our goal was to find the best way to bring these dependencies into a container. The applications required Linux, OpenJDK, Node, and WordPress, so we used open source images from the VMware Tanzu® Application Catalog™. We created Dockerfiles that inherit from these open source images and modified them to meet each application's requirements. See Figure 2.
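The before-and-after regression testing described above can be approximated with a response-parity check. The sketch below is illustrative only and is not the team's actual test suite: it assumes both the VM-hosted and the containerized deployments expose the same HTTP paths returning JSON, and any base URLs and paths passed to it are hypothetical.

```python
"""Sketch: compare responses from the VM-hosted and containerized versions
of an application after a lift-and-shift.

Assumption (hypothetical): both deployments serve the same paths and
return JSON bodies that should be identical.
"""
import json
import urllib.request
from typing import Callable, Dict, List

Fetcher = Callable[[str], bytes]


def http_fetch(url: str) -> bytes:
    """Fetch a URL and return the raw response body."""
    with urllib.request.urlopen(url, timeout=10) as resp:
        return resp.read()


def compare_deployments(vm_base: str, container_base: str, paths: List[str],
                        fetch: Fetcher = http_fetch) -> Dict[str, str]:
    """Return {path: reason} for every path whose responses do not match."""
    mismatches: Dict[str, str] = {}
    for path in paths:
        try:
            old = json.loads(fetch(vm_base + path))
            new = json.loads(fetch(container_base + path))
        except Exception as exc:  # network or JSON errors count as failures
            mismatches[path] = f"error: {exc}"
            continue
        if old != new:
            mismatches[path] = "response bodies differ"
    return mismatches
```

An empty result means every checked path behaved the same on both platforms. Injecting `fetch` keeps the comparison testable without live endpoints.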

- Automated CI/CD

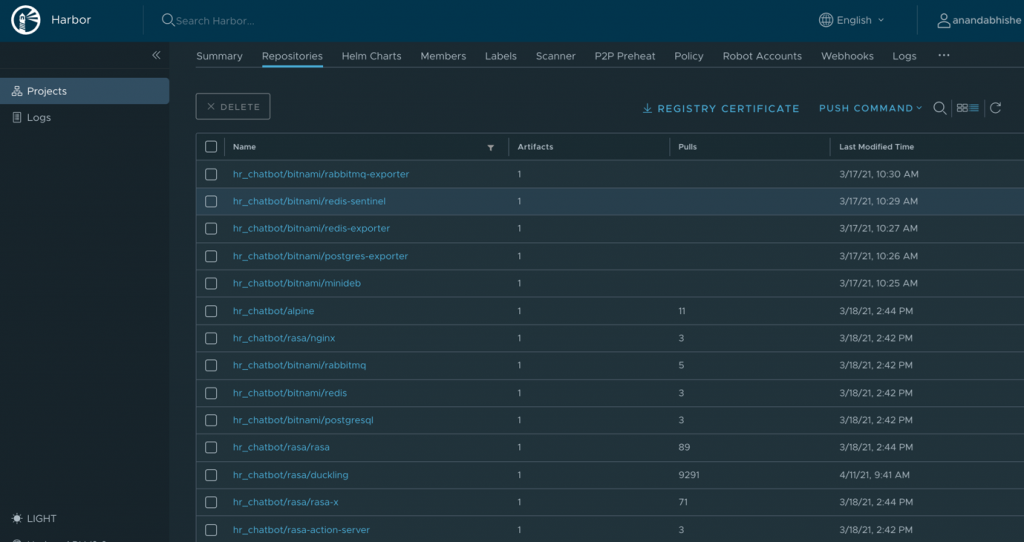

Once we had built and tested the container locally, we pushed it to a registry. We created an organization on VMware's internal container registry and used a private repository to hold all versions of the application's container images.

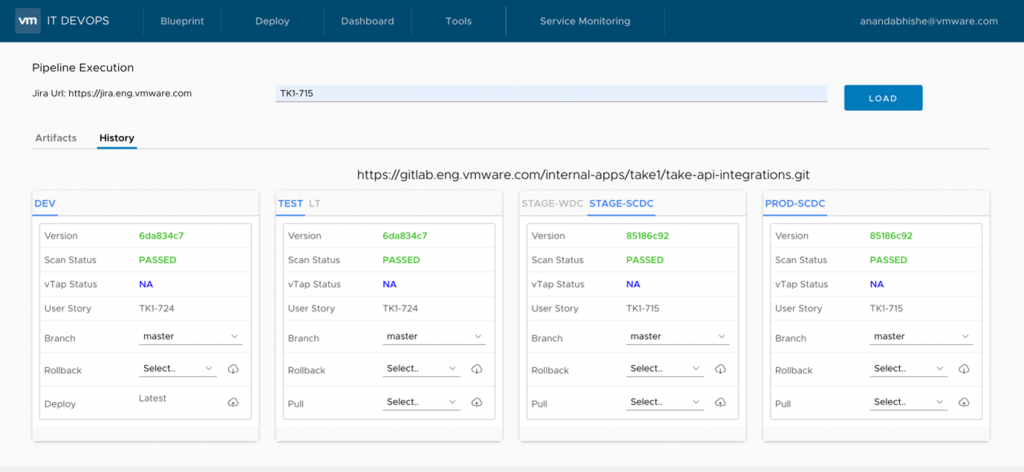

For deployments, we used the IT DevOps portal, an internal VMware portal built with the Clarity design system, together with VMware vRealize® Code Stream™, which enables seamless container deployments. The pipeline compiles the application, runs a Docker build on the Dockerfile with a version tag, and pushes the resulting image to the internal registry. The tool then deploys the containers onto VMware Tanzu® Kubernetes Grid™. See Figure 3.

Figure 3. CI/CD pipeline
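The pipeline stages above can be sketched as an ordered list of CLI commands. This is a minimal illustration under stated assumptions, not the vRealize Code Stream pipeline definition: the Maven compile step and the registry host, organization, image, deployment, container, and namespace names are all hypothetical, and the deploy step uses `kubectl set image` to trigger a rolling update.

```python
"""Sketch of the CI/CD stages: compile, build a versioned image, push it to
a private registry, then roll it out to Kubernetes.

All names (registry, organization, image, deployment, container, namespace)
and the Maven build command are hypothetical placeholders.
"""
import subprocess
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class PipelineConfig:
    registry: str      # internal registry host (hypothetical)
    organization: str  # registry organization that owns the private repo
    image: str         # repository name for the application image
    version: str       # version tag applied to each build
    deployment: str    # Kubernetes Deployment to update
    container: str     # container name inside that Deployment
    namespace: str     # Kubernetes namespace of the Deployment

    @property
    def image_ref(self) -> str:
        return f"{self.registry}/{self.organization}/{self.image}:{self.version}"


def pipeline_commands(cfg: PipelineConfig) -> List[List[str]]:
    """Return the command line for each stage, in execution order."""
    return [
        ["mvn", "-B", "package"],                       # compile (assumes a Maven project)
        ["docker", "build", "-t", cfg.image_ref, "."],  # build image with a version tag
        ["docker", "push", cfg.image_ref],              # push to the private registry
        ["kubectl", "set", "image", f"deployment/{cfg.deployment}",
         f"{cfg.container}={cfg.image_ref}", "-n", cfg.namespace],  # rolling update
    ]


def run_pipeline(cfg: PipelineConfig, dry_run: bool = True) -> None:
    """Print the commands (dry run) or execute them, stopping at the first failure."""
    for cmd in pipeline_commands(cfg):
        if dry_run:
            print(" ".join(cmd))
        else:
            subprocess.run(cmd, check=True)
```

Keeping the image reference in one place means the tag that is built, pushed, and deployed is always the same version.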

- Tanzu Kubernetes Grid

Tanzu Kubernetes Grid is an enterprise-ready Kubernetes runtime that provides a consistent way to run Kubernetes across data centers, public clouds, and edge locations. Deploying to Tanzu Kubernetes Grid gives developers the following benefits:

- Scalability: software can be deployed scaled out across multiple pods from its first release, and deployments can be scaled in or out at any time.

- Time savings: a deployment can be paused at any time and resumed later.

- Version control: deployed pods can be updated to newer versions of the application images, and a deployment can be rolled back to an earlier revision if the current version is not stable.

- Horizontal autoscaling: Kubernetes autoscalers can automatically adjust the number of pods in a deployment based on the usage of specified resources, within defined limits.

- Rolling updates: updates to a Kubernetes deployment are rolled out gradually across the deployment's pods, with optional limits on the number of pods that can be unavailable (maxUnavailable) and the number of extra pods that can exist temporarily (maxSurge).
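The autoscaling and rolling-update benefits above follow simple arithmetic described in the Kubernetes documentation. The Horizontal Pod Autoscaler computes desiredReplicas = ceil(currentReplicas × currentMetricValue / targetMetricValue), skips scaling when that ratio is within a tolerance of 1.0 (0.1 by default), and clamps the result to the configured minimum and maximum. For rolling updates, a percentage `maxSurge` is rounded up and a percentage `maxUnavailable` is rounded down, both defaulting to 25%. The sketch below reproduces these rules for illustration only; it is not the controllers' code, and the metric values in the examples are made up.

```python
"""Illustrative re-implementation of two Kubernetes sizing rules:
HPA replica calculation and rolling-update surge/unavailable bounds.
A simplification for explanation, not the actual controller code."""
import math
from typing import Tuple, Union

IntOrPercent = Union[int, str]


def hpa_desired_replicas(current_replicas: int, current_metric: float,
                         target_metric: float, min_replicas: int,
                         max_replicas: int, tolerance: float = 0.1) -> int:
    """desired = ceil(current * current_metric / target), clamped to [min, max]."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        desired = current_replicas  # close enough to target: no scaling
    else:
        desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


def _resolve(value: IntOrPercent, replicas: int, round_up: bool) -> int:
    """Turn an absolute number or a percentage string like '25%' into a pod count."""
    if isinstance(value, str) and value.endswith("%"):
        scaled = replicas * int(value[:-1])
        # Integer arithmetic avoids float rounding surprises.
        return -(-scaled // 100) if round_up else scaled // 100
    return int(value)


def rolling_update_bounds(replicas: int, max_surge: IntOrPercent = "25%",
                          max_unavailable: IntOrPercent = "25%") -> Tuple[int, int]:
    """Return (max total pods, min available pods) during a rolling update."""
    surge = _resolve(max_surge, replicas, round_up=True)
    unavailable = _resolve(max_unavailable, replicas, round_up=False)
    if surge == 0 and unavailable == 0:
        unavailable = 1  # Kubernetes falls back to 1 so the rollout can progress
    return replicas + surge, replicas - unavailable
```

For example, a deployment at 4 replicas averaging 90% CPU against a 60% target scales to `hpa_desired_replicas(4, 90, 60, 2, 10)`, which is 6. With the defaults, `rolling_update_bounds(10)` returns `(13, 8)`: at most 13 pods exist during the rollout and at least 8 stay available.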

- Monitoring and observability

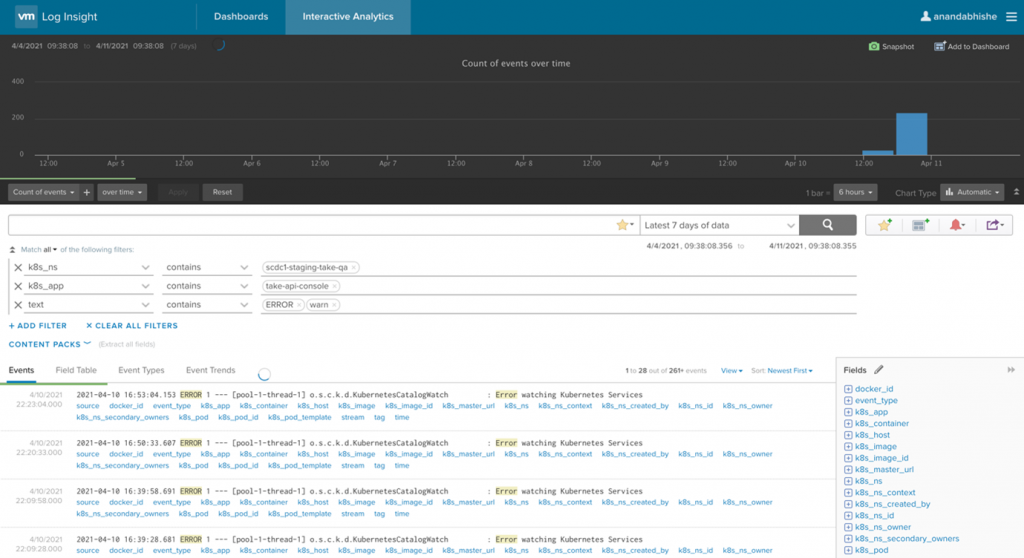

We used VMware vRealize® Log Insight Cloud™ to monitor the container logs. It provides a dashboard for viewing all events, with filtering options that let us set up alerts for any event of interest. See Figure 4.

Figure 4. Log monitoring through vRealize Log Insight

VMware Tanzu® Observability™ by Wavefront provides in-depth monitoring of the applications running on Tanzu Kubernetes Grid. See Figure 5.

Figure 5. Tanzu Observability showing the Kubernetes namespace health

We use Octant, VMware's open source Kubernetes dashboard, to check the health of Kubernetes pods, deployments, and services.

Great expectations fulfilled

Overall, we deployed more than 50 microservices and more than 100 pods across all environments.

Now, whether it's quarter-end reporting in the Take1 and Wellbeing applications or a big announcement on the Benefits site, we have a straightforward way to scale up resources within a few seconds: we go to the microservices under heavy load and scale them up with a click. This has let us avoid the outages we used to see.
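Under the hood, scaling a microservice comes down to changing its Deployment's replica count. A minimal sketch of doing the same programmatically, assuming the official Kubernetes Python client, whose `AppsV1Api.patch_namespaced_deployment_scale` patches a Deployment's scale subresource. This is not necessarily how the team's tooling does it, and the deployment name, namespace, and replica count in the examples are hypothetical.

```python
"""Sketch: programmatic equivalent of a one-click scale-up.

Assumes the official Kubernetes Python client (pip install kubernetes);
the API object is passed in so the logic can be exercised without a cluster.
"""
from typing import Any, Dict


def scale_body(replicas: int) -> Dict[str, Any]:
    """Build the patch body for a Deployment's scale subresource."""
    if replicas < 0:
        raise ValueError("replicas must be non-negative")
    return {"spec": {"replicas": replicas}}


def scale_deployment(apps_api: Any, name: str, namespace: str, replicas: int) -> None:
    """Set the replica count of a Deployment.

    apps_api is expected to be a kubernetes.client.AppsV1Api instance.
    """
    apps_api.patch_namespaced_deployment_scale(
        name=name, namespace=namespace, body=scale_body(replicas))
```

Against a real cluster, after `config.load_kube_config()`, calling `scale_deployment(client.AppsV1Api(), "benefits-web", "hr", 8)` would be roughly equivalent to `kubectl scale deployment/benefits-web --replicas=8 -n hr`.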

All of this is possible because of the Tanzu Kubernetes Grid platform, which has helped us keep our HR business running as usual, including but not limited to:

- Data subject rights (DSR)

- Take1 continuous education

- Benefits

- Wellbeing allowance app

- Wellbeing microsite

- Base pay calculator

VMware on VMware blogs are written by IT subject matter experts sharing stories about our digital transformation using VMware products and services in a global production environment. Contact your sales rep or vmwonvmw@vmware.com to schedule a briefing on this topic. Visit the VMware on VMware microsite and follow us on Twitter.