Should you use Eager Zero Thick?

For VMFS, maybe? but for vSAN probably not.

Ok, I’ll agree I probably owe an explanation of why.

A quick history lesson of the 3 kinds of VMDKs used on VMFS.

thin: Space is not guaranteed, it’s consumed as the disk is written to.

thick (Sometimes called Lazy Thick): Space is reserved by the blocks are initialized lazily, so VMFS has a zero’ing cost the first time a block is written

EZT or Eager Zero Thick: The entire disk is zero’d so that VMFS never needs to write metadata. This is required for shared disk use cases, as VMFS can’t coordinate metadata updates.

How are VMFS and vSAN different?

These virtual disk types have no meaning on vSAN. From a vSAN perspective all objects are thin (thin vs thick vs EZT makes no difference). This is similar to how NFS is always sparse, and reservations are something you can do (with NFS VAAI) but it doesn’t change the fact that it doesn’t actually write out zeros or fill the space like it did on VMFS. On VMFS this could be mitigated largely using ATS and WRITE_SAME (With further improvements in the works). I’ve always been dubious on this benefit given how many databases and applications would often write out and pre-allocate file systems in advance. There are likely some corner cases such as creating an ext4 file system is slower but you can generally work around that if you really care (mkfs.ext4 -E nodiscard).

While there may be benefits, modern all-flash arrays, VMFS6 improvements, VAAI have significantly lowered the overhead to thin VMDKs. Given that UNMAP/TRIM storage reclaim requires thin VMDKs, for most use cases I recommend thin be the default without a good justification otherwise.

Why Thin on vSAN

Having an EZT disk won’t necessarily improve vSAN performance the same way it does for VMFS. Pre-filling zeros on VMFS had the advantage of avoiding “burn-in” bottlenecks tied to metadata allocation.

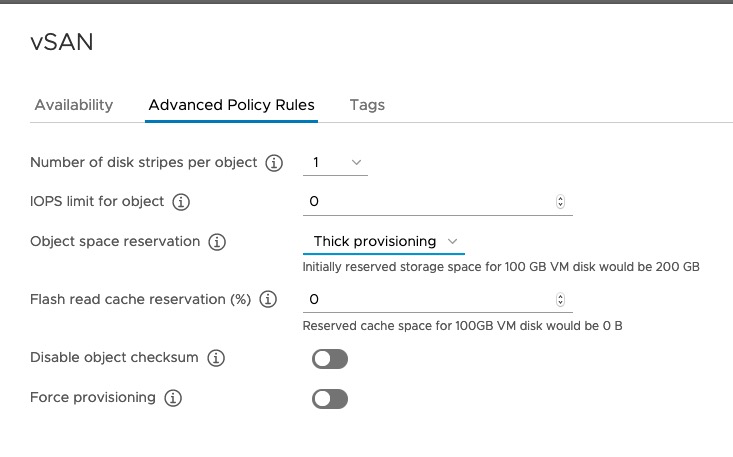

Why does vSAN with a disk type set to “thick” or “eager zero thick” behave as thick, despite having a thin vSAN policy? For legacy support reasons a VMDK disk typeset as thick will override the SPBM policy. If the VM should be using “Thick provisioning”, please apply a Storage Policy to the VM with the vSAN option “Object space reservation” set to 100 (Thick provisioning).

First off, vSAN has it’s own control of space reservation. the Object Space Reservation policy OSR=100% (Thick) or OSR=0% (Thin). This is used in cases where you want to reserve capacity and prevent allocations that would allow you to run out of space on the cluster. In general, I recommend the default of “Thin” as it offers the most capacity flexibility and thick provisioning tends to be reserved for cases where it is impossible or incredibly difficult to ever add capacity to a cluster and you have very little active monitoring of a cluster.

What about FT and Shared Disk use cases (RAC, SQL, WFC, etc)? This requirement has been removed for vSAN. For vSAN you also do not need to configure object space reservation too thick to take advantage of these functionalities. VMFS there may still be some benefits here due to how shared VMDK metadata updates are owned by a single owner (vSAN metadata updates are distributed so not an issue).

What do I gain by using Thin VMDKs as my default?

You save a lot of space. Talking to others in the industry 20-30% capacity savings. If combined with TRIM/UNMAP automated reclaim, even more space can be crawled back! This can lead to huge savings on storage costs. As a bonus, deduplication and compression work as intended only when OSR=0% and the default thin VMDK type is chosen.

Also if configured to auto-reclaim you lower the chances of an out-of-space condition, so this can increase availability. Eager Zero Thick or Thick VMDKs on vSAN are not going to reserve capacity in a way that prevents over commitment on vSAN, instead, it will simply use more capacity.

Expect a health alarm if you have set EZT or thick VMDKs stored on a vSAN datastore. It is considered a faulty configuration. KB 66758 includes more information about this. If you need to reserve capacity (for now) use the SPBM capacity reservation policy. Additionally, William Lam has a blog on this topic as well.

Have more questions about thick vs. thin? I’m on Twitter, @Lost_Signal, and would love to hear your questions and opinions.

Discover more from VMware Cloud Foundation (VCF) Blog

Subscribe to get the latest posts sent to your email.