The virtual machine snapshot is a commonly used feature in the VMware vSphere environment.

One of the most common questions we get is, “What is the impact of VM snapshot creation and deletion on the performance of guest applications running inside the VMs?”

In this blog entry, we explore these performance aspects in VMFS, vSAN, and vVOL environments with a variety of workloads and provide recommendations.

Note: Read the paper VMware vSphere Snapshots: Performance and Best Practices for more information. The paper was updated November 8, 2021 to include the performance implications of snapshots on a vSphere/Kubernetes environment.

What is a snapshot?

A snapshot preserves the state and data of a VM at a specific point in time.

- The state includes the VM’s power state (for example, powered on, powered off, suspended).

- The data includes all the files that make up the VM. This includes disks, memory, and other devices, such as virtual network interface cards.

A VM provides several operations for creating and managing snapshots and snapshot chains. These operations let you create snapshots, revert to any snapshot in the chain, and remove snapshots. You can create extensive snapshot trees.

For more information on vSphere snapshots, refer to the VMware documentation “Using Snapshots to Manage Virtual Machines.”

Snapshot formats

When you take a snapshot of a VM, the state of the virtual disk is preserved, the guest stops writing to it, and a delta or child disk is created. The snapshot format chosen for the delta disk depends on many factors, including the underlying datastore and VMDK characteristics, and has a profound impact on performance.

SEsparse

SEsparse is the default format for all delta disks on VMFS6 datastores. SEsparse is a format similar to VMFSsparse (also referred to as the redo-log format) with some enhancements.

vSANSparse

vSANSparse, introduced in vSAN 6.0, is a new snapshot format that uses an in-memory metadata cache and a more efficient sparse filesystem layout; it can operate much closer to base disk performance than VMFSsparse or SEsparse.

vVols/native snapshots

In a VMware virtual volumes (vVols) environment, data services such as snapshot and clone operations are offloaded to the storage array. With vVols, storage vendors use native snapshot facilities, hence vSphere snapshots can operate at a near base disk performance level.

In this blog entry, we discuss VM snapshot performance on the different datastores supported in the vSphere environment.

Performance testing workflow

For each workload scenario we considered, we used the VM performance without any snapshots as the baseline. After adding each new VM snapshot, we reran the benchmark to capture the new performance numbers. The workflow was as follows:

1. Run workload inside the test VM with no snapshots (baseline)

2. Create a snapshot of the test VM

3. Run workload inside the test VM

4. Repeat steps 2 and 3 in a loop
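
The workflow above can be sketched as a small driver loop. This is a minimal sketch, not the harness used in the study: `run_workload` and `create_snapshot` are hypothetical callables that you would supply yourself (for example, wrappers around a benchmark run and a snapshot request).

```python
from typing import Callable, List


def measure_snapshot_impact(
    run_workload: Callable[[], float],
    create_snapshot: Callable[[int], None],
    max_snapshots: int,
) -> List[float]:
    """Return one benchmark score per snapshot level, baseline first.

    run_workload and create_snapshot are hypothetical hooks supplied by the
    caller; they are not part of any VMware API.
    """
    scores = [run_workload()]          # step 1: baseline, no snapshots
    for level in range(1, max_snapshots + 1):
        create_snapshot(level)         # step 2: add one snapshot to the chain
        scores.append(run_workload())  # step 3: rerun the same workload
    return scores                      # step 4 is the loop itself
```

The returned list has `max_snapshots + 1` entries, so index 0 is always the no-snapshot baseline.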

Performance

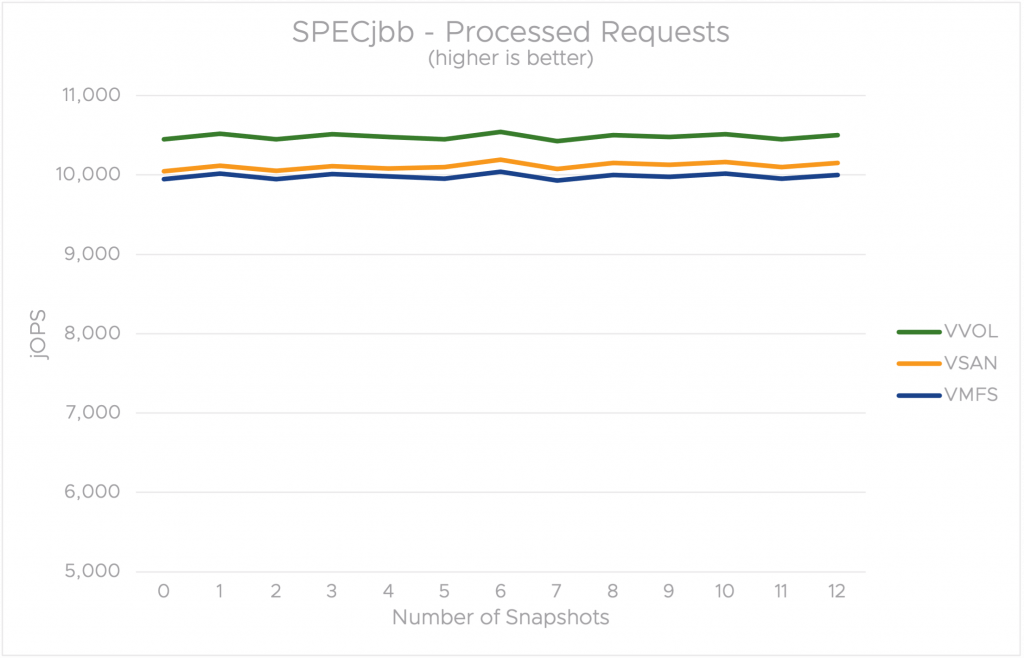

Our first experiments included two sets of FIO test scenarios: one with 100% random 4KB I/Os and one with 100% sequential 4KB I/Os. The third set of tests used a SPECjbb 2015 workload with no disk I/O component.

FIO workload parameters

- -ioengine=libaio -iodepth=32 --rw=randrw --bs=4096 -direct=1 -numjobs=4 -group_reporting=1 -size=50G --time_based -runtime=300 -randrepeat=1

- -ioengine=libaio -iodepth=32 --rw=readwrite --bs=4096 -direct=1 -numjobs=4 -group_reporting=1 -size=50G --time_based -runtime=300 -randrepeat=1
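
The two FIO parameter sets differ only in the `rw` mode (`randrw` for random I/O, `readwrite` for sequential I/O). The following is a minimal sketch of assembling them as argument lists, written here in the long `--option` form. The job name and target file are hypothetical placeholders, since the post does not state them.

```python
from typing import List

# Options shared by both runs, taken from the parameter lists above.
COMMON_OPTS = [
    "--ioengine=libaio", "--iodepth=32", "--bs=4096", "--direct=1",
    "--numjobs=4", "--group_reporting=1", "--size=50G",
    "--time_based", "--runtime=300", "--randrepeat=1",
]


def fio_args(rw_mode: str, job_name: str, target: str) -> List[str]:
    """Build an fio argument list for rw_mode 'randrw' or 'readwrite'.

    job_name and target are assumptions for illustration; the original
    post does not specify them.
    """
    if rw_mode not in ("randrw", "readwrite"):
        raise ValueError(f"unexpected rw mode: {rw_mode}")
    return ["fio", f"--name={job_name}", f"--filename={target}",
            f"--rw={rw_mode}", *COMMON_OPTS]
```

The resulting list can be passed to `subprocess.run(args, check=True)` on a system where `fio` is installed.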

SPECjbb 2015 parameters

- JAVA_OPTS : -Xms30g -Xmx30g -Xmn27g -XX:+UseLargePages -XX:LargePageSizeInBytes=2m -XX:-UseBiasedLocking -XX:+UseParallelOldGC

- specjbb.control (type/ir/duration): PRESET:10000:300000

The baseline guest application performance without any snapshots is different on all three datastores; this is expected due to the differences with underlying hardware. The main focus of this study is to understand the impact on the guest application performance with snapshots.
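
Because the baselines differ, comparing datastores is easier when each result is expressed relative to its own no-snapshot baseline. The following is a minimal sketch of that normalization; the helper name is hypothetical, and the numbers in the usage note are placeholders, not measurements from this study.

```python
from typing import List


def relative_to_baseline(scores: List[float]) -> List[float]:
    """Express each score as a fraction of the first (no-snapshot) score."""
    if not scores or scores[0] <= 0:
        raise ValueError("need a positive baseline as the first score")
    baseline = scores[0]
    return [s / baseline for s in scores]
```

For example, `relative_to_baseline([200.0, 100.0, 50.0])` returns `[1.0, 0.5, 0.25]`, meaning the second and third runs reached 50% and 25% of the baseline.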

The presence of a VM snapshot has the greatest impact on guest application performance when using the VMFS datastore. As can be seen in Figures 1 and 2, FIO performance (random and sequential I/O) on VMFS drops significantly with the first snapshot.

The guest performance impact in the presence of snapshots on VMFS is due to the nature of the SEsparse redo logs. When I/O is issued from a VM with a snapshot, vSphere determines whether the data resides in the base VMDK (data written prior to a VM snapshot operation) or if it resides in the redo-log (data written after the VM snapshot operation) and the I/O is serviced accordingly. The resulting I/O latency depends on various factors, such as I/O type (read vs. write), whether the data exists in the redo-log or the base VMDK, snapshot level, redo-log size, and type of base VMDK.
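
The lookup described above can be modeled as a search through the snapshot chain, newest delta first. This is a simplified toy model, not the SEsparse on-disk format: the class and method names are hypothetical, and each disk is represented as a dictionary from block number to data.

```python
from typing import Dict, List


class SnapshotChain:
    """Toy model of a base disk plus a chain of redo-log (delta) disks."""

    def __init__(self, base: Dict[int, bytes]) -> None:
        self.base = base
        self.deltas: List[Dict[int, bytes]] = []  # oldest first

    def take_snapshot(self) -> None:
        # Freeze the current top of the chain; new writes go to a new delta.
        self.deltas.append({})

    def write(self, block: int, data: bytes) -> None:
        # Writes land in the newest delta, or in the base if no snapshot exists.
        target = self.deltas[-1] if self.deltas else self.base
        target[block] = data

    def read(self, block: int) -> bytes:
        # Check the newest delta first, then older ones, then the base disk.
        for delta in reversed(self.deltas):
            if block in delta:
                return delta[block]
        return self.base[block]
```

In this model, a read of a block that was never rewritten has to check every delta before reaching the base disk, which gives some intuition for why snapshot level appears among the latency factors.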

In comparison to VMFS, we observe the presence of VM snapshots on a vSAN datastore has minimal impact on guest application performance for workloads that have predominantly sequential I/O. In the case of random I/O tests, similar to the VMFS scenario, we observe substantial impact on guest performance on vSAN.

Of the three scenarios, the presence of VM snapshots has the least impact on guest performance when using vVOL, thanks to its native snapshot facilities. In fact, our testing showed the impact was nearly zero even as we increased the snapshot chain length.

SPECjbb performance remained unaffected in the presence of snapshots (Figure 3) in all the test scenarios. This is expected: the workload has no disk I/O component, so there is no I/O to the VM delta disks.

Impact of snapshot removal on guest performance

Deleting a snapshot consolidates the changes between snapshots and writes all the data from the delta disk to the parent snapshot. When you delete the base parent snapshot, all the changes merge with the base VM disk.
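
Consolidation can be pictured as replaying the blocks of each delta disk onto its parent. The following is a minimal sketch under a toy assumption (each disk is a dictionary from block number to data); `consolidate` is a hypothetical helper, not a vSphere API.

```python
from typing import Dict, List


def consolidate(base: Dict[int, bytes], deltas: List[Dict[int, bytes]]) -> Dict[int, bytes]:
    """Merge deltas (oldest first) into a copy of base and return the result.

    Later deltas overwrite earlier ones, matching write order.
    """
    merged = dict(base)
    for delta in deltas:       # oldest to newest
        merged.update(delta)   # newer data replaces older data per block
    return merged
```

After the merge, every block is found in a single dictionary again, which mirrors the state of a VM with no snapshots.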

To understand the impact of VM snapshot consolidation/removal, we considered an FIO test scenario that included 16KB I/O sizes with a 50:50 mix of sequential and random I/Os.

As in the previous scenario, we increased the number of snapshots from 1 to 12 and captured guest application performance after the addition of each new VM snapshot. We then reversed the workflow by deleting the VM snapshots and captured the guest application performance after the removal of each VM snapshot.

Figure 4 includes two charts. The first chart shows the impact on guest application performance with the addition of each snapshot. As in the previous 100% random I/O scenario (figure 1), we see a significant impact on guest performance when running the 50% random I/O test on both VMFS and vSAN in the presence of snapshots. Once again, the guest performance on vVOL remains unaffected.

The second chart shows we’re able to recover the performance with each snapshot deletion on all the datastores. After all of the snapshots are deleted, the guest performance is similar to what it was prior to creating any snapshots. In fact, guest performance during snapshot removal is almost a mirror image of guest performance during snapshot creation.

Recommendations

We suggest the following recommendations to get the best performance when using snapshots:

- Snapshots can significantly reduce guest performance for I/O-intensive workloads, especially in a VMFS environment. We strongly recommend using snapshots only on a temporary basis; our findings show that the guest fully recovers its performance after the snapshots are deleted.

- Using storage that relies on native snapshot technology, such as vVOL, is highly recommended to avoid guest performance impact in the presence of snapshots.

- If using vVOL is not an option, using vSAN over VMFS is recommended when using snapshots.

- Our findings show that performance degradation increases with snapshot chain length. Keep the snapshot chain as short as possible to minimize the guest performance impact.

To learn more about VMware snapshots, full details of the testbeds used, results for other workloads we considered, and the impact of VM snapshots on other provisioning operations such as VM clone, read the paper.

Notes:

- Our results are not comparable to SPEC’s trademarked metrics for this benchmark. Learn more about SPECjbb 2015.

- The snapshot format used for all delta disks on an NFS datastore is VMFSsparse. Hence, the guest performance loss on an NFS datastore in the presence of snapshots is expected to be similar to the loss seen on a VMFS datastore. Our recent performance testing confirmed this.
