As a VMware {code} Coach, I wanted to share a situation I recently experienced where I responded to a request quickly and then had to reevaluate & revise my approach.

A customer was looking to map some Linux virtual disks on a vVol datastore to their respective Pure Storage FlashArray volumes.

Looking at the request, I quickly determined that the new mechanism my colleague Cody Hosterman blogged about with the release of vSphere 7 wasn’t going to be sufficient. This was because the customer was on an older version of vSphere, and the new functionality wasn’t available to them.

Quickly putting a script together against my minimally used lab, I sent something to my customer.

It ran without a hitch in my environment, largely because my array was mostly empty.

My first attempt

I decided to go another route and accomplish some of the same things with an approach that works on older vSphere releases (6.5 in this case). Some of the things I needed to accomplish included:

- Determine which vmdks reside on a vVol datastore

- Determine which of those are attached to a given VM

- Determine which specific VM hard disk it is, and the SCSI ID

- Map that SCSI ID back to a VM’s hard disk, and then retrieve the vVol’s name on FlashArray

My code was a little crude, and not particularly efficient.

I used the ‘lsscsi’ command in my CentOS guest to return the SCSI ID for comparison.

```powershell
# Setup the script's parameters
[CmdletBinding()]Param(
  [Parameter(Mandatory=$True)][string]$VM,
  [Parameter(Mandatory=$True)][string]$GuestUser,
  [Parameter(Mandatory=$True)][string]$GuestPassword,
  [Parameter(Mandatory=$False)][boolean]$Table
)

# Retrieve all datastores that are vVols
$VvolDstore = Get-Datastore | Where-Object {$_.Type -eq "VVOL"}

# Retrieve ALL disks, regardless of VM that reside on those datastores
$Disks = Get-HardDisk -Datastore $VvolDstore

# Configure the output fields for the hard disks
$vmname = @{N='VM';E={$_.Parent.Name}}
$scsiid = @{label="ScsiId";expression={$hd = $_;$ctrl = $hd.Parent.Extensiondata.Config.Hardware.Device | where{$_.Key -eq $hd.ExtensionData.ControllerKey}
  "$($ctrl.BusNumber):$($_.ExtensionData.UnitNumber)"}}
$vvolid = @{label="vVolUuid";expression={$_ | Get-VvolUuidFromHardDisk}}
$favolume = @{label="FaVolume";expression={get-faVolumeNameFromVvolUuid -vvolUUID ($_ | Get-VvolUuidFromHardDisk)}}

# Return all of the disks that are attached to the VM, and then format the output.
$VvolDisks = Get-VM $VM | Get-HardDisk | Where-Object {$_.Filename -in $Disks.Filename} |
  Select $vmname, Name, CapacityGB,
  @{N='SCSIid';E={$hd = $_;$ctrl = $hd.Parent.Extensiondata.Config.Hardware.Device | where{$_.Key -eq $hd.ExtensionData.ControllerKey}
    "$($ctrl.BusNumber):$($_.ExtensionData.UnitNumber)"}},
  Filename, $vvolid, $favolume,
  @{N='DeviceInfo';E={$hd = $_;$ctrl = $hd.Parent.Extensiondata.Config.Hardware.Device | where{$_.Key -eq $hd.ExtensionData.ControllerKey}
    # Using lsscsi to determine the SCSI ID in CentOS - This may be different for different versions of Linux
    $GuestScript = "lsscsi -b -s 0:"+$ctrl.BusNumber+":"+$_.ExtensionData.UnitNumber+":0"
    $GuestDevice = Invoke-VMScript -ScriptText $GuestScript -Guestuser $GuestUser -GuestPassword $GuestPassword -ScriptType "bash" -VM $_.Parent | Select -ExpandProperty scriptoutput
    "$($GuestDevice)"}}

# If Table is $true, then output as a table.
If ($Table -eq $true) { $VvolDisks | FT } else { $VvolDisks }
```

The above script HardDiskToVvol.ps1 wasn’t efficient because it enumerated all of the vmdks on all vVol datastores, and then matched those that were attached to the specific VM.
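The SCSI ID step is easier to follow in isolation. Here is a minimal sketch of the controller lookup, using mock objects in place of the real PowerCLI data so that it runs without a vCenter connection. The `$devices` and `$disks` collections and all of their values are made up for illustration; in the script, the same fields come from each VM's and hard disk's `ExtensionData`.

```powershell
# Mock stand-ins for a VM's ExtensionData.Config.Hardware.Device (values are made up)
$devices = @(
    [pscustomobject]@{ Key = 1000; BusNumber = 0; Label = 'SCSI controller 0' }
    [pscustomobject]@{ Key = 1001; BusNumber = 1; Label = 'SCSI controller 1' }
)

# Mock stand-ins for each hard disk's ExtensionData (values are made up)
$disks = @(
    [pscustomobject]@{ Name = 'Hard disk 1'; ControllerKey = 1000; UnitNumber = 0 }
    [pscustomobject]@{ Name = 'Hard disk 2'; ControllerKey = 1001; UnitNumber = 1 }
)

# For each disk, find the controller whose Key matches the disk's ControllerKey,
# then combine the controller's bus number with the disk's unit number
$scsiIds = foreach ($disk in $disks) {
    $ctrl = $devices | Where-Object { $_.Key -eq $disk.ControllerKey }
    [pscustomobject]@{
        Name   = $disk.Name
        ScsiId = "$($ctrl.BusNumber):$($disk.UnitNumber)"
    }
}

$scsiIds
```

With this mock data, 'Hard disk 2' sits on the second controller, so its ID comes out as 1:1.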

Room for improvement

Upon hearing back from the customer, I learned that the script took a significant amount of time in their environment. Why was it so slow? Surely it shouldn’t have been.

After digging a little deeper, I realized the error of my ways and adjusted my approach.

In my test/lab environment, the process wasn’t particularly slow, but keep in mind that I only had a few vVols. In an environment with a significant number of vmdks on a vVol datastore, it could be quite slow.

So I then approached it a little differently:

- Query the VM for the individual vmdks

- Determine if those vmdks reside on a vVol datastore

- Determine which specific VM hard disk it is, and the SCSI ID

- Map that SCSI ID back to a VM’s hard disk, and then retrieve the vVol’s name on FlashArray

By only looking at the individual vmdks attached to the specific VM, the process is much faster, especially in cases where there are a significant number of vmdks residing on a vVol datastore. I also added the ability to prompt for VM guest credentials, which are used to invoke ‘lsscsi’ in the guest.

```powershell
# Configure our Parameters
[CmdletBinding()]Param(
  [Parameter(Mandatory=$True)][string]$VM,
  [Parameter(Mandatory=$False)][string]$GuestUser,
  [Parameter(Mandatory=$False)][string]$GuestPassword,
  [Parameter(Mandatory=$False)][boolean]$Table
)

# If a username/password have not been provided, prompt for them
If ((-Not $GuestUser) -or (-Not $GuestPassword)) {
  $VMCred = Get-Credential -Message "Enter credentials for $VM"
} else {
  $password = (ConvertTo-SecureString $GuestPassword -AsPlainText -Force)
  $VMCred = New-Object System.Management.Automation.PSCredential -ArgumentList ($GuestUser, $password)
}

# Setup the array for the custom data collection
$DiskOutput = @()

# Return the disks that are attached to the VM
$VMHDS = Get-HardDisk -VM $VM

# Enumerate each of the vmdks that are attached to the VM
Foreach ($VMHD in $VMHDS) {

  # Get the current datastore
  $Datastore = Get-Datastore -Id $VMHD.ExtensionData.Backing.Datastore

  # If the datastore that backs the current vmdk is of type VVOL, then let's do our work
  If ($Datastore.Type -eq "VVOL") {

    # Get the Pure Array from the vVol Datastore if possible
    $FaName = Get-PfaConnectionOfDatastore -Datastore $Datastore

    # Get the controller for the current disk
    $CTRL = $VMHD.Parent.ExtensionData.Config.Hardware.Device | Where {$_.Key -eq $VMHD.ExtensionData.ControllerKey}

    # Setup the script that is used to pull guest os information for the current disk
    # This example executes 'lsscsi' to return CentOS guest information.
    # Adjust as necessary for different flavors of Linux
    $GuestScript = "lsscsi -b -s 0:"+$CTRL.BusNumber+":"+$VMHD.ExtensionData.UnitNumber+":0"

    # Execute the script in the guest and store the results in $GuestDevice
    $GuestDevice = Invoke-VMScript -ScriptText $GuestScript -GuestCredential $VMCred -ScriptType "bash" -VM $VMHD.Parent | Select -ExpandProperty scriptoutput

    # Create the custom object to store our data
    $PSObject = New-Object PSObject -Property @{
      VMName     = $VMHD.Parent.Name
      HDName     = $VMHD.Name
      CapacityGB = $VMHD.CapacityGB
      ScsiId     = "$($CTRL.BusNumber):$($VMHD.ExtensionData.UnitNumber)"
      VvolId     = $VMHD | Get-VvolUuidFromHardDisk
      FaVolume   = Get-faVolumeNameFromVvolUuid -vvolUUID ($VMHD | Get-VvolUuidFromHardDisk) -flasharray $FaName
      DeviceInfo = $GuestDevice
    }

    # Add the current record to the DiskOutput array
    $DiskOutput += $PSObject
  }
}

# Display as a Table if desired
If ($Table -eq $true) { $DiskOutput | FT } else { $DiskOutput }
```

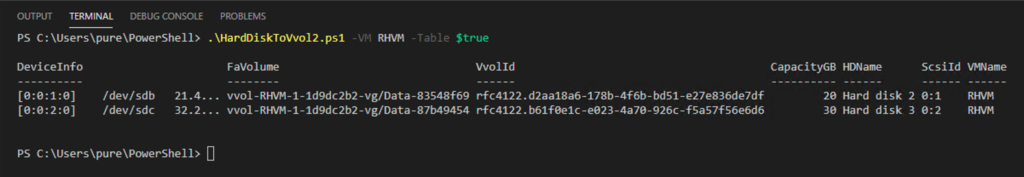

The resulting output looks something like this:
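The DeviceInfo column holds the raw text returned by ‘lsscsi’. If you want the guest device name as its own field, one option is to parse that text. The sketch below is only an illustration under stated assumptions: `Get-LsscsiFilter` and `ConvertFrom-LsscsiBrief` are hypothetical helper names, not part of the scripts above, and the sample line is made up to match the `[H:C:T:L]  device  size` shape I would expect from `lsscsi -b -s`. Check the actual output in your own distribution before relying on it.

```powershell
# Hypothetical helpers for illustration; they are not part of the scripts above.

function Get-LsscsiFilter {
    param([int]$BusNumber, [int]$UnitNumber)
    # Same layout the scripts build by hand: host 0, channel = bus, target = unit, LUN 0
    '0:{0}:{1}:0' -f $BusNumber, $UnitNumber
}

function ConvertFrom-LsscsiBrief {
    param([string]$Line)
    # Assumed line shape from 'lsscsi -b -s': [H:C:T:L]  /dev/sdX  <size>
    if ($Line.Trim() -match '^\[(\d+:\d+:\d+:\d+)\]\s+(\S+)\s+(\S+)$') {
        [pscustomobject]@{
            Address = $Matches[1]
            Device  = $Matches[2]
            Size    = $Matches[3]
        }
    }
}

# Build the filter for bus 0, unit 1
$filter = Get-LsscsiFilter -BusNumber 0 -UnitNumber 1

# Made-up sample line standing in for Invoke-VMScript's ScriptOutput
$sample = "[0:0:1:0]    /dev/sdb   10.7GB`n"
$parsed = ConvertFrom-LsscsiBrief -Line $sample

$filter
$parsed
```

A line that does not match the assumed shape returns nothing, so an unexpected format from a different Linux flavor shows up as an empty field instead of a wrong device name.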

In a very large environment, the difference can be very significant. Consider the first script being run against an environment with hundreds of vmdks residing on a vVol datastore. It would put each of those hundreds of vmdks in an array, and then have to check the VM’s vmdks against that list.

The HardDiskToVvol2.ps1 script is more efficient because it uses the properties of the individual disks and their datastore backing, rather than querying datastores for all the vmdks and only selecting those connected to the requested VM.

The second script simply checks the VM’s vmdks, determines if they are on a vVol-backed datastore, and then performs the same operations. In my example, the VM only has 2 vmdks that meet these criteria. The second script runs significantly faster because it only has to work with the properties of those two vmdks and their datastore backings.
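To make the difference concrete, here is a small sketch of the two filtering strategies against mock data. Everything in it is made up for illustration: the datastore names, the file names, and the `DatastoreType` property, which stands in for the datastore lookup the second script performs. It runs without PowerCLI. In a real environment the expensive part of the first approach is enumerating every disk on the datastores, which this mock cannot reproduce; it only shows that both approaches arrive at the same disks.

```powershell
# Mock inventory (all values made up): 500 vmdks on a vVol datastore,
# two of which belong to the VM we care about
$allVvolDisks = 1..500 | ForEach-Object {
    [pscustomobject]@{ Filename = ('[vvol-ds] vm{0}/vm{0}.vmdk' -f $_) }
}

# The VM's own disks: two on vVols, one on VMFS
$vmDisks = @(
    [pscustomobject]@{ Filename = '[vvol-ds] vm7/vm7.vmdk';   DatastoreType = 'VVOL' }
    [pscustomobject]@{ Filename = '[vvol-ds] vm8/vm8.vmdk';   DatastoreType = 'VVOL' }
    [pscustomobject]@{ Filename = '[vmfs-ds] vm7/vm7_1.vmdk'; DatastoreType = 'VMFS' }
)

# Approach 1: build the full list first, then match the VM's disks against it
$viaFullList = $vmDisks | Where-Object { $_.Filename -in $allVvolDisks.Filename }

# Approach 2: look only at the VM's disks and test each one's datastore type
$viaDiskBacking = $vmDisks | Where-Object { $_.DatastoreType -eq 'VVOL' }

$viaFullList.Filename
$viaDiskBacking.Filename
```

Both approaches return the same two vVol disks. The second one never needs the 500-entry list.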

Basically, script 1 was a quick Saturday night script that was run against a mostly bare environment. Script 2 takes a more optimized approach that scales better and should behave the same in any environment.

While my first attempt met the need, always look for opportunities to streamline and optimize code.

The above scripts are also on my site: https://www.jasemccarty.com/blog/powershell-match-linux-vmdk-on-vvols-to-flasharray-volume/