For many organizations, Kubernetes adoption began as a technical initiative, but scaling it into a reliable, enterprise-grade platform often proved far more difficult than expected. What started as innovation quickly became a source of complexity:

- Rising operational overhead

- Fragmented environments

- Security, compliance, and skillset gaps

- Slower time-to-market

At the same time, the stakes are rising. AI initiatives, data-intensive applications, and digital customer experiences now depend on infrastructure that is not just scalable but also predictable, secure, and efficient. According to the latest State of Platform Engineering Report[1], 64% of platform engineers identify Kubernetes as a primary focus area for achieving automated, reliable, and standardized application deployment.

With VMware Cloud Foundation (VCF) and the evolution of its VMware vSphere Kubernetes Service (VKS) component, the conversation has shifted from infrastructure management alone to enabling business outcomes.

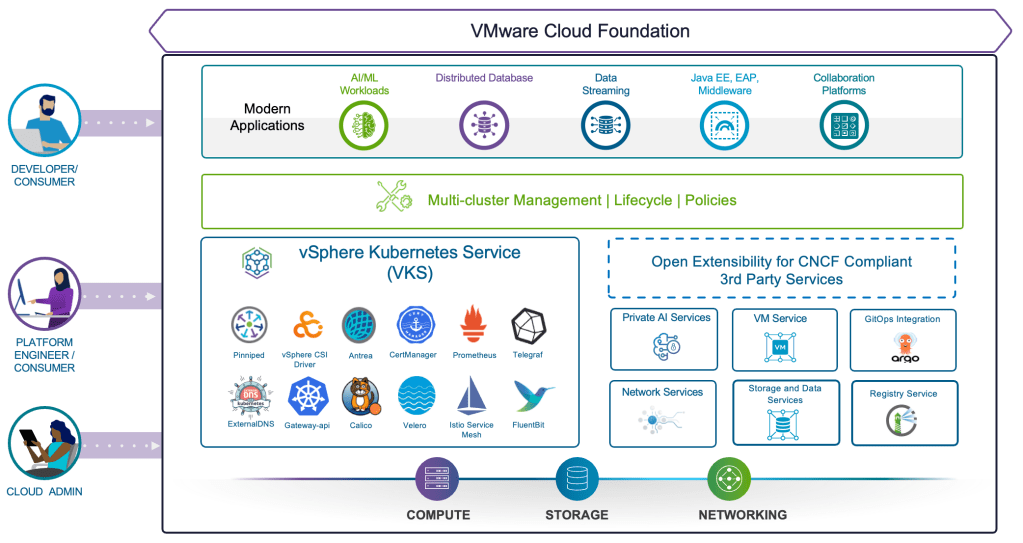

VCF addresses these key priorities by providing a unified platform for containers and virtual machines, with a built-in, CNCF-certified Kubernetes runtime delivered through its VKS component. VKS enables platform engineers to deploy and manage Kubernetes clusters while leveraging a robust set of cloud services included in VCF, as well as CNCF-conformant third-party services (Figure 1). VKS is among the first platforms certified as Kubernetes AI conformant, and it also simplifies multi-cluster management[2], empowering enterprises to confidently run AI and other modern workloads.

The CIO Imperative: Reduce Complexity, Increase Velocity

CIOs today face a dual mandate: ensure security, governance, control, and compliance while driving innovation faster, all on a flat or shrinking budget.

Historically, these goals have been at odds. However, with lower total cost of ownership (TCO), faster time-to-value, better security, and a simplified operational experience, VKS is fast becoming the Kubernetes runtime of choice for modern applications. To help maximize investment returns, VKS in VCF 9.1 will deliver three major benefits:

(a) Enhanced scale and performance to support business critical demands

(b) Improved operational efficiency to reduce cost and complexity

(c) Built-in security and compliance by design

VCF 9.1: Kubernetes Enhancements At A Glance

VKS in VCF 9.1 will deliver enhancements across three critical pillars:

- Scale & Performance: up to 500 clusters per control plane, up to 70% faster cluster provisioning, up to 75% faster upgrades, and multi-network support

- Operational Efficiency: Intelligent node pool placement, multiple clusters per zone, and Distributed Transit Gateway

- Security & Compliance: Automated secret injection and granular access controls

Enhanced scale and performance to support business critical demands

Whether it’s peak retail traffic, global service delivery, or large-scale AI workloads, infrastructure must respond instantly without introducing instability.

In VCF 9.1, VKS will enable:

- Rapid provisioning, reducing deployment times by up to 70%

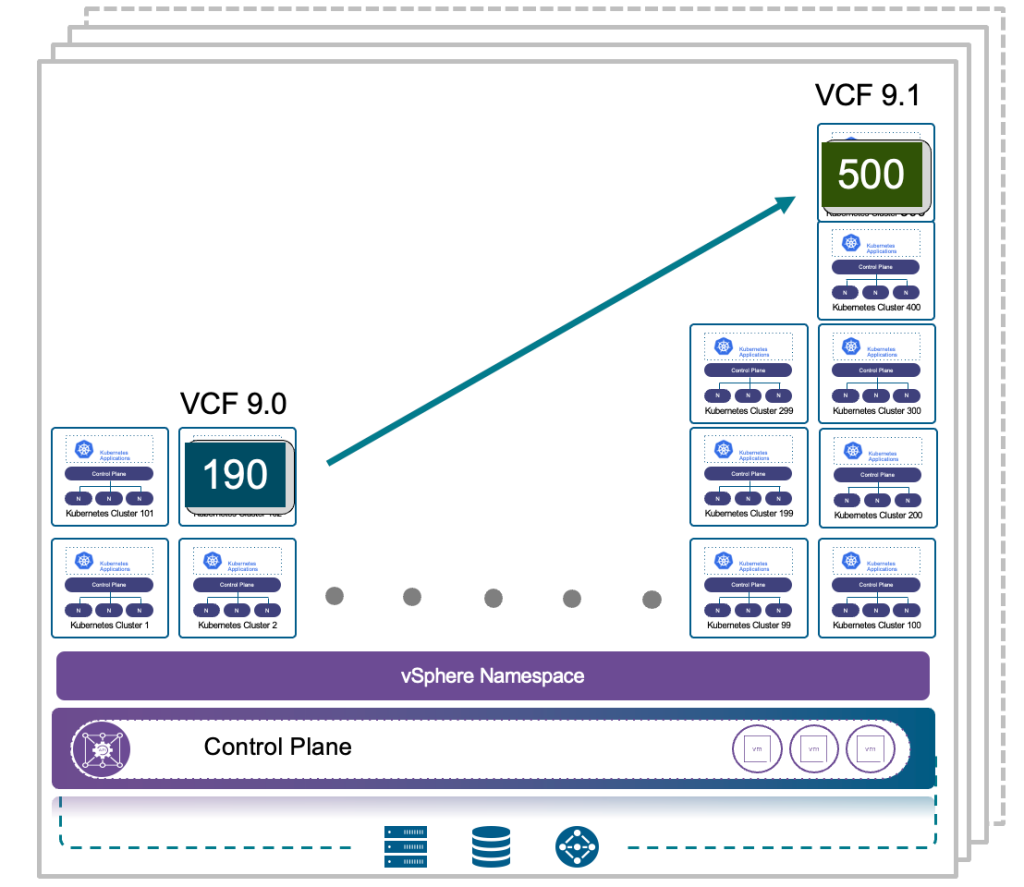

- Massive scalability, supporting up to 500 clusters within a single control plane

- Higher performance, isolating critical applications for a better quality of experience

Business benefits:

Faster rollout of new services, improved customer experience during peak demand, and the ability to support modern workload demands without re-architecting infrastructure.

Platform Engineering benefits:

The modern application landscape spans a diverse performance spectrum, ranging from high-bandwidth or latency-sensitive applications to GPU-intensive AI workloads. The need for stronger isolation, a smaller blast radius, or customized cluster configurations is driving organizations toward a higher number of clusters.

Figure 2: VKS will support up to 500 workload clusters per control plane instance for better workload isolation and a significantly smaller attack surface.

For larger environments, multiple control plane instances can be deployed and managed centrally through VCF Automation (VCFA), enabling horizontal scalability while maintaining operational consistency.
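The sizing rule implied here is simple capacity arithmetic: with a limit of 500 workload clusters per control plane instance, an environment that needs N clusters requires at least ⌈N / 500⌉ instances. The Python sketch below makes that explicit. The function name is hypothetical, and the calculation deliberately ignores headroom, failure domains, and any sizing guidance in the product documentation.

```python
# Per-instance limit stated for VKS in VCF 9.1.
MAX_CLUSTERS_PER_CONTROL_PLANE = 500


def control_planes_needed(workload_clusters: int,
                          limit: int = MAX_CLUSTERS_PER_CONTROL_PLANE) -> int:
    """Minimum number of control plane instances for a given cluster count.

    Hypothetical helper: pure capacity arithmetic (ceiling division) that
    ignores headroom, failure domains, and any product sizing guidance.
    """
    if workload_clusters < 0 or limit <= 0:
        raise ValueError("cluster count must be >= 0 and limit must be > 0")
    # Integer ceiling division avoids floating-point rounding surprises.
    return (workload_clusters + limit - 1) // limit


if __name__ == "__main__":
    for n in (120, 500, 501, 1800):
        print(f"{n} clusters -> {control_planes_needed(n)} control plane instance(s)")
```

For example, 1,800 workload clusters would need at least four control plane instances, which could then be managed centrally through VCFA.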

- Sudden surges in demand, common in e-commerce applications during the holiday shopping season, in streaming video, and in financial applications during market volatility, require infrastructure that can react in real time. In addition, organizations often struggle to rapidly stage mirror environments for testing production deployments. To help customers meet these demands, VKS will accelerate operational velocity by deploying new clusters up to 70% faster and cutting upgrade windows by up to 75%.

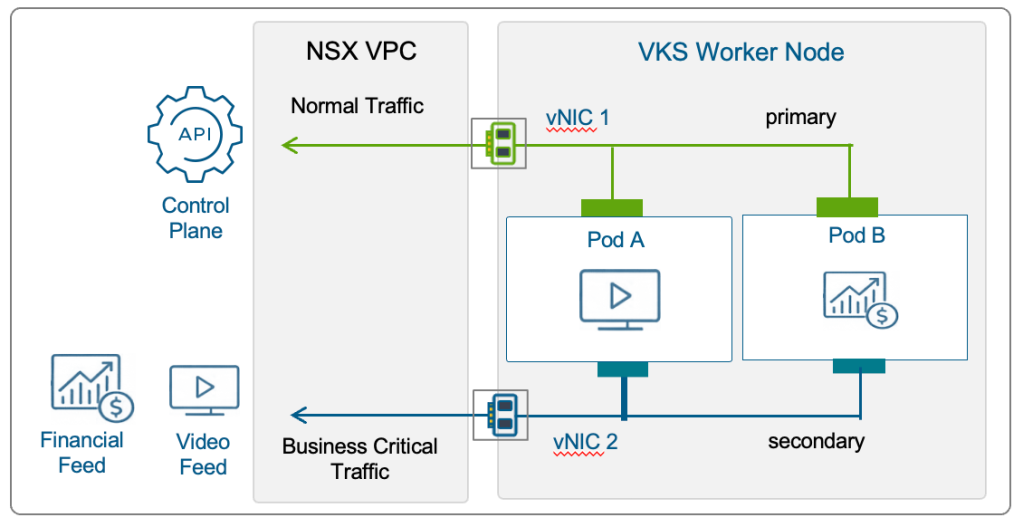

- To provide a better quality of experience for bandwidth-intensive streaming applications, latency-critical financial services, or regulated applications requiring classified access, VKS in VCF 9.1 will introduce advanced networking capabilities. Cluster nodes can be deployed with multiple vNICs, enabling traffic isolation at the node level (Figure 3). This allows application, storage, and management traffic to be separated, or dedicated network paths to be assigned to latency-sensitive or high-throughput workloads, improving performance, consistency, and operational control.
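Conceptually, node-level traffic isolation means giving each traffic class its own vNIC on its own network. The Python sketch below models only that idea. The interface names, network names, and the `nic_for` and `is_isolated` helpers are hypothetical and do not represent VKS cluster configuration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NodeNic:
    name: str     # hypothetical interface name inside the node
    network: str  # hypothetical network the vNIC attaches to


# Hypothetical node layout: one dedicated vNIC per traffic class.
NODE_NICS = {
    "management": NodeNic("eth0", "mgmt-net"),
    "application": NodeNic("eth1", "app-net"),
    "storage": NodeNic("eth2", "storage-net"),
}


def nic_for(traffic_class: str, layout=NODE_NICS) -> NodeNic:
    """Return the vNIC that carries the given class of traffic."""
    try:
        return layout[traffic_class]
    except KeyError:
        raise ValueError(f"no dedicated vNIC for traffic class {traffic_class!r}") from None


def is_isolated(layout=NODE_NICS) -> bool:
    """True if every traffic class uses a different network (no shared paths)."""
    networks = [nic.network for nic in layout.values()]
    return len(networks) == len(set(networks))


if __name__ == "__main__":
    print(nic_for("storage").name)  # storage traffic stays on its own vNIC
    print(is_isolated())
```

If two classes were mapped onto the same network, `is_isolated` would return `False`, which is the situation multiple vNICs per node are meant to avoid.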

Improved operational efficiency to lower the cost of innovation

One of the biggest hidden costs in enterprise IT is operational friction.

VKS in VCF 9.1 will benefit from both Kubernetes-specific enhancements and broader platform capabilities introduced in VCF:

- Intelligent Node Pool Placement, which uses the vSphere Distributed Resource Scheduler (DRS) algorithm to eliminate infrastructure bottlenecks that slow down deployment

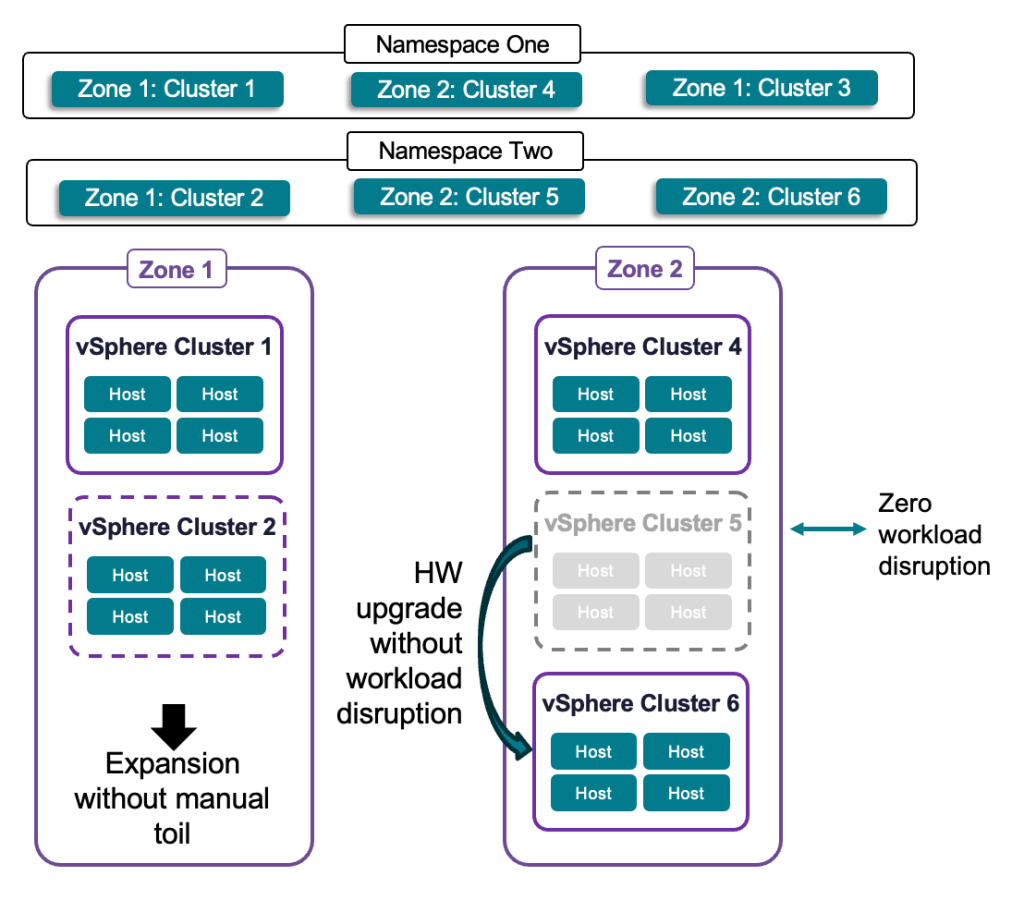

- Multiple Clusters per Zone, enabling non-disruptive hardware lifecycle management and scaling without manual toil

- Distributed Transit Gateway, simplifying network onboarding for faster application deployment

Business benefits:

Organizations achieve faster time-to-value by accelerating innovations from initial concept to production. In addition, applications stay online and profitable during service migrations and ongoing hardware lifecycle management, reducing downtime costs.

Platform Engineering benefits:

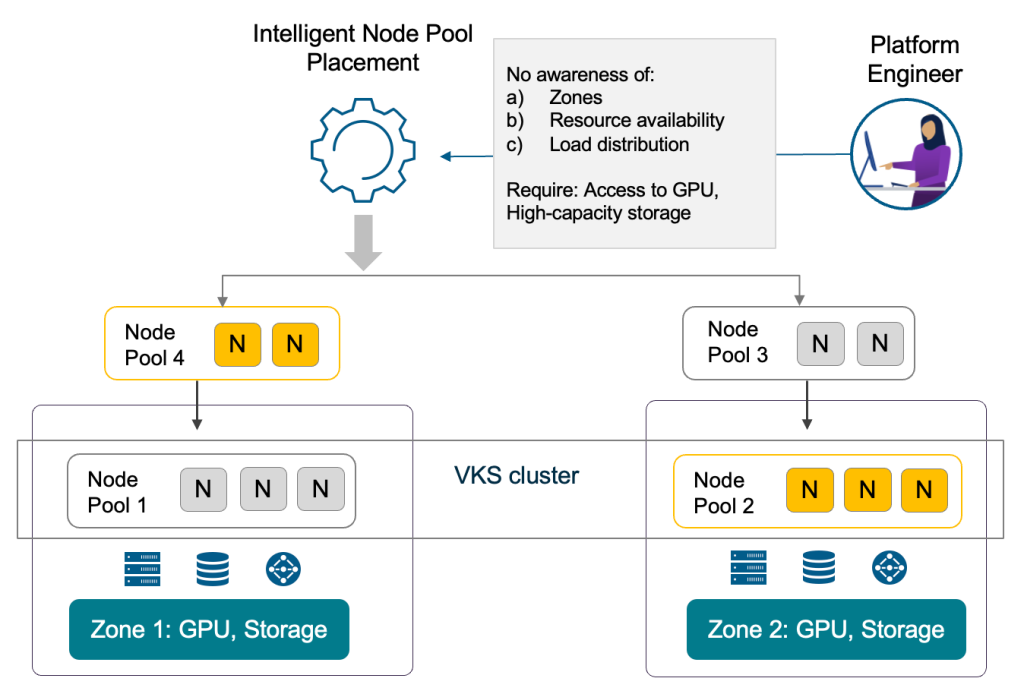

In workload deployments, node pool placement is a critical but often complex task. Platform Engineers must accommodate a wide range of applications: AI apps that need GPU access, streaming apps that require high-capacity storage, and e-commerce apps that require high availability. Yet Platform Engineers should not need deep infrastructure expertise to navigate what these modern workloads demand: specialized resources such as GPUs and high-capacity storage, zone awareness, resource availability across zones, load distribution, and failure constraints tied to high availability (HA).
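To make these constraints concrete, the Python sketch below shows the kind of reasoning a placement engine performs: filter hosts on hard requirements (GPU access, free capacity), spread nodes across zones for HA, and prefer the least-loaded host within each zone. It is a simplified, hypothetical illustration with made-up host names and capacity units; it is not the vSphere DRS algorithm that VKS uses.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Host:
    name: str
    zone: str
    has_gpu: bool
    free_cpu: int  # free vCPUs; a hypothetical, simplified capacity measure


def place_node_pool(hosts, replicas, cpu_per_node, needs_gpu=False):
    """Return one host name per node; decrements free_cpu on chosen hosts.

    Hypothetical illustration only, not the vSphere DRS algorithm.
    """
    # 1. Filter: drop hosts that violate hard constraints.
    eligible = [h for h in hosts
                if (h.has_gpu or not needs_gpu) and h.free_cpu >= cpu_per_node]
    by_zone = defaultdict(list)
    for h in eligible:
        by_zone[h.zone].append(h)
    zones = sorted(by_zone)
    if not zones:
        raise RuntimeError("no host satisfies the node pool constraints")

    placement = []
    for i in range(replicas):
        # 2. Spread: start from a different zone for each node so HA replicas
        #    land in separate failure domains; fall through to the next zone
        #    if the preferred one is out of capacity.
        for offset in range(len(zones)):
            zone = zones[(i + offset) % len(zones)]
            fits = [h for h in by_zone[zone] if h.free_cpu >= cpu_per_node]
            if fits:
                # 3. Balance: choose the host with the most free capacity.
                host = max(fits, key=lambda h: h.free_cpu)
                host.free_cpu -= cpu_per_node
                placement.append(host.name)
                break
        else:
            raise RuntimeError(f"capacity exhausted after {len(placement)} nodes")
    return placement


if __name__ == "__main__":
    hosts = [
        Host("esx-a1", "zone-a", True, 32),
        Host("esx-a2", "zone-a", False, 64),
        Host("esx-b1", "zone-b", True, 16),
        Host("esx-c1", "zone-c", False, 48),
    ]
    print(place_node_pool(hosts, replicas=3, cpu_per_node=8, needs_gpu=True))
```

Even this toy version has to juggle three interacting rules; a production scheduler also weighs memory, storage, affinity, and live load, which is the burden the automated placement described below is meant to take off Platform Engineers.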

- With intelligent node pool placement, VKS will reduce the need for Platform Engineers to possess deep infrastructure domain expertise (Figure 4). This automation will offer flexibility without deployment fragmentation, giving Platform Engineers a consistent, predictable, and reliable experience. These enhancements significantly reduce friction in deploying workloads while eliminating placement failures and hard-to-debug placement issues.

- With support for multiple clusters in a single zone, Cloud Admins can replace, retire, or upgrade hardware without impacting application availability or disrupting the ‘desired state’, giving Platform Engineers peace of mind (Figure 5). This abstraction will also allow the infrastructure to respond dynamically to growing compute and storage needs, so that scaling up doesn’t require a complex redesign of the environment.

- Platform Engineers will be able to onboard new workloads faster, with better network performance, using Distributed Transit Gateway (DTGW), which provides a simpler and more consistent way to connect workloads to the switch fabric. DTGW also improves latency and scale because the ESX host connects directly to the switch fabric instead of requiring a full NSX Edge cluster.

Tighter security and compliance that is built-in and not bolted-on

With performance and scale addressed, the next critical milestone is security and compliance. Security in Kubernetes environments is often fragmented, requiring multiple tools and manual processes to manage secrets and enforce access policies.

VKS in VCF 9.1 will simplify this by embedding security controls into the platform:

- Integrated secret management workflows that reduce manual configuration

- Fine-grained access controls that enable least-privilege enforcement

- Consistent policy enforcement that improves auditability and compliance

This will allow platform teams to automate secret handling and enforce access boundaries consistently across clusters, reducing operational risk and improving compliance readiness.

Business benefits:

Organizations will benefit from a reduced attack surface and a lower risk of data breaches, helping keep critical infrastructure protected against evolving threats. This unified approach builds a stronger compliance posture through consistent policy enforcement, providing greater confidence in deploying regulated and sensitive workloads.

Platform Engineering benefits:

- Customers can achieve a more resilient security posture through a simplified, automated way to inject secrets as part of the deployment (Figure 6). By ensuring that sensitive data is handled securely without manual configuration, this enhancement will strengthen overall security, improve auditability, and provide a framework for managing secrets consistently across all workloads.

- Platform Engineers can also configure granular policies for workloads to access secrets, reducing the attack surface and ensuring only authorized workloads gain access to secrets. This will help customers meet compliance and regulatory requirements.
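The access model described above follows a default-deny pattern: a workload receives a secret only if it has been explicitly granted access. The Python sketch below illustrates that pattern under stated assumptions. Identifying workloads by namespace and service account, the `SecretPolicy` class, and the `inject_secrets` helper are all hypothetical and are not the VKS secret-injection API.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Workload:
    namespace: str
    service_account: str


@dataclass
class SecretPolicy:
    # secret name -> set of (namespace, service_account) pairs allowed to read it
    grants: dict = field(default_factory=dict)

    def allow(self, secret: str, workload: Workload) -> None:
        self.grants.setdefault(secret, set()).add(
            (workload.namespace, workload.service_account))

    def can_read(self, secret: str, workload: Workload) -> bool:
        # Default deny: a secret with no matching grant is readable by nobody.
        return (workload.namespace, workload.service_account) in self.grants.get(secret, set())


def inject_secrets(policy, store, workload, requested):
    """Build env-var style settings for the secrets a workload requested.

    Raises PermissionError on the first unauthorized request so that a
    misconfigured deployment fails loudly instead of starting without secrets.
    """
    env = {}
    for secret in requested:
        if not policy.can_read(secret, workload):
            raise PermissionError(
                f"{workload.namespace}/{workload.service_account} may not read {secret!r}")
        env[secret.upper().replace("-", "_")] = store[secret]
    return env


if __name__ == "__main__":
    policy = SecretPolicy()
    payments = Workload("payments", "api")
    policy.allow("db-password", payments)
    store = {"db-password": "example-value"}  # placeholder, never a real secret
    print(inject_secrets(policy, store, payments, ["db-password"]))
```

Failing the whole injection on the first denied request is a design choice in this sketch; it surfaces policy gaps at deploy time rather than as runtime errors inside the application.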

Container as a Service in VCF 9.1

VCF 9.1 will introduce a Container Service runtime, delivered through VCF Automation with complete lifecycle management. This simplified container runtime will execute directly on ESX without cluster overhead, delivering workload isolation and resource efficiency. The VCF platform will fully automate scheduling, isolation, performance optimization, and upgrades. As the application architecture evolves, the UI will generate consistent YAML for a smooth transition to VKS clusters, offering a gentle on-ramp from simple container deployments to full Kubernetes capabilities.

About the VKS 3.6 Release

Although VKS is part of the same integrated cloud platform software, its release cycle is decoupled from the VCF release schedule, with three VKS updates per year. This component-specific schedule is designed to keep VKS aligned with upstream CNCF Kubernetes releases. With VKS 3.6, which shipped in February, customers can provision fully conformant clusters on the latest Kubernetes version, 1.35. To simplify planning, VKS 3.6 also lets customers deploy and manage Kubernetes versions 1.33 and 1.34 alongside the latest release.
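A practical consequence of supporting several Kubernetes minor versions side by side is planning upgrades within that window. The Python sketch below checks a version against the 1.33 to 1.35 window described above and lists the intermediate minor versions for an upgrade. It assumes the upstream Kubernetes rule of upgrading one minor version at a time; the helper names are hypothetical, and the VKS 3.6 release notes remain the authoritative source for supported upgrade paths.

```python
import re

# Kubernetes minor versions that VKS 3.6 can deploy and manage, per the text above.
SUPPORTED_MINORS = (33, 34, 35)  # 1.33, 1.34, 1.35


def parse_minor(version: str) -> int:
    """Extract the minor number from strings such as 'v1.34.2' or '1.35'."""
    match = re.fullmatch(r"v?1\.(\d+)(?:\.\d+)?", version.strip())
    if match is None:
        raise ValueError(f"unrecognized Kubernetes version: {version!r}")
    return int(match.group(1))


def is_supported(version: str) -> bool:
    return parse_minor(version) in SUPPORTED_MINORS


def upgrade_path(current: str, target: str) -> list:
    """Minor versions to step through, one at a time (upstream Kubernetes rule)."""
    cur, tgt = parse_minor(current), parse_minor(target)
    if tgt < cur:
        raise ValueError("downgrades are not modeled")
    if cur not in SUPPORTED_MINORS or tgt not in SUPPORTED_MINORS:
        raise ValueError("current or target version is outside the supported window")
    return [f"1.{m}" for m in range(cur + 1, tgt + 1)]


if __name__ == "__main__":
    print(is_supported("v1.34.2"))
    print(upgrade_path("v1.33.1", "1.35"))
```

Under these assumptions, a cluster on 1.33 reaches 1.35 by passing through 1.34, so keeping all three versions available gives teams room to stage each step.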

To learn more about VKS

VKS web page: Learn more about VKS

VKS 3.6 Release Blog: Overview of the most important features and functionality

VKS 3.6 Release Notes: Full details on features, fixes, and supported configurations

Container as a Service in the blog: Accelerate, Streamline, and Control Your Self-Service Private Cloud with VCF 9.1

Blogs Coming Soon

- Solving High-Demand Kubernetes Networking in VCF 9.1 with Multi-Network Support: How to enable multiple networks in your VKS clusters

- Faster Kubernetes Cluster Deployments and Upgrades: How VCF 9.1 accelerates day 2 operations

[1] 2025 State of Platform Engineering Report, Volume 4

[2] VKS multi-cluster management in VCF 9.0.1

Discover more from VMware Cloud Foundation (VCF) Blog
